
Understand TDD and BDD differences with practical examples. Discover when each fits your team and how AI-native platforms eliminate the maintenance burden.

The debate between Test-Driven Development (TDD) and Behavior-Driven Development (BDD) has shaped how engineering teams approach software quality for nearly two decades. TDD pushes developers to write tests before code, creating a tight feedback loop that catches defects at the unit level. BDD extends that discipline to the entire team, using plain-language scenarios to align developers, testers, and business stakeholders around shared expectations of system behavior.

Both methodologies are powerful. Both have real limitations. And both are being fundamentally reshaped by AI-native testing platforms that deliver the core benefits of each approach while eliminating the overhead that has historically held teams back.

This guide breaks down TDD and BDD with practical depth, walks through real examples, and explains when each approach fits, including how modern AI capabilities are changing the calculus for enterprise teams.

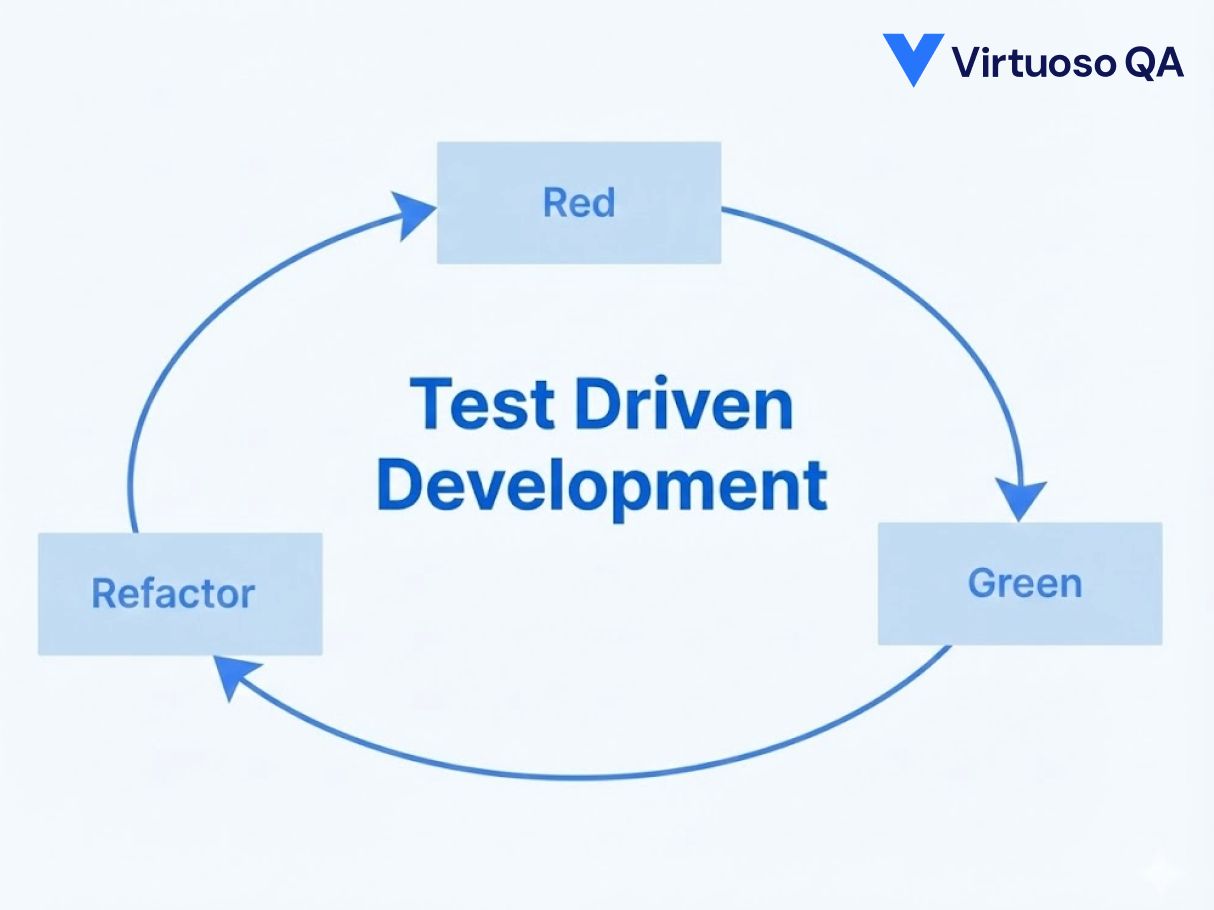

Test-Driven Development is a software development methodology in which automated tests are written before the production code they validate. The developer defines the expected behavior of a small unit of code through a test, watches the test fail, writes just enough code to make the test pass, then refactors for clarity and performance.

This cycle is known as Red, Green, Refactor. Red represents the failing test. Green represents the passing test after code implementation. Refactor represents improving the code structure without changing its behavior.

TDD is fundamentally a developer discipline. It enforces code correctness at the most granular level, creates a living specification of how the system works, and builds a comprehensive regression safety net that grows with every feature.

The TDD workflow follows a precise sequence that repeats for every new unit of functionality.

The developer first defines what the code should do by writing a test that specifies inputs, expected outputs, and assertions. This test is expected to fail because the production code does not yet exist.

The developer then runs the test and confirms that it fails. This is not a wasted step: a test that fails for the expected reason shows that it is actually checking the intended behavior. It also verifies that the testing infrastructure, framework, and environment are functioning properly.

Next, the developer writes only enough production code to make the test pass. This promotes lean, focused implementations and prevents over-engineering.

With the new test passing, the developer runs the full test suite to ensure no existing functionality is broken. The code is then refactored to improve readability, maintainability, and design, with the test suite providing a safety net against regressions.
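One pass through the cycle can be sketched in Python. The function `is_leap_year` and the helper `check_leap_year` are hypothetical examples chosen for brevity, not taken from any particular codebase. The same assertions run against the first passing implementation and the refactored one, which is how the test suite guards the refactor step.

```python
def check_leap_year(impl) -> None:
    """Red: written first; until an implementation exists, running it fails."""
    assert impl(2024) is True    # divisible by 4
    assert impl(2023) is False   # not divisible by 4
    assert impl(1900) is False   # century year not divisible by 400
    assert impl(2000) is True    # century year divisible by 400


def is_leap_year_green(year: int) -> bool:
    """Green: the simplest code that makes every assertion pass."""
    if year % 400 == 0:
        return True
    if year % 100 == 0:
        return False
    return year % 4 == 0


def is_leap_year(year: int) -> bool:
    """Refactor: a more compact expression of exactly the same rule."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# The unchanged test passes against both versions, so the refactor
# improved structure without altering behavior.
check_leap_year(is_leap_year_green)
check_leap_year(is_leap_year)
```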

This cycle repeats continuously. Over time, the team accumulates a comprehensive suite of unit tests that document system behavior and catch defects the moment they are introduced.

Consider a login function that validates user credentials. In TDD, the developer starts by writing a test:

```python
# Red: both tests fail until authenticate() is implemented.
def test_valid_credentials_return_success():
    result = authenticate("user@example.com", "correct_password")
    assert result.status == "success"
    assert result.token is not None


def test_invalid_password_returns_failure():
    result = authenticate("user@example.com", "wrong_password")
    assert result.status == "failure"
    assert result.token is None
```

Both tests fail initially. The developer then implements the authenticate function to satisfy these tests. After all tests pass, the code is refactored for production readiness. Additional tests are written for edge cases: empty credentials, locked accounts, expired passwords, and rate limiting.
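Here is a minimal Green-step implementation that would satisfy both tests. The `AuthResult` type, the in-memory `_USERS` store, and the `reason` field are hypothetical and exist only for this sketch. A real system would verify salted password hashes rather than plaintext. Two of the edge cases listed above (empty credentials and locked accounts) appear as additional tests. Expired passwords and rate limiting would follow the same pattern.

```python
import secrets
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuthResult:
    status: str                   # "success" or "failure"
    token: Optional[str] = None   # session token, only set on success
    reason: Optional[str] = None  # hypothetical: why authentication failed


# Hypothetical in-memory user store. Plaintext passwords are for
# illustration only; production code should compare password hashes.
_USERS = {
    "user@example.com": {"password": "correct_password", "locked": False},
    "locked@example.com": {"password": "correct_password", "locked": True},
}


def authenticate(email: str, password: str) -> AuthResult:
    if not email or not password:
        return AuthResult("failure", reason="missing credentials")
    user = _USERS.get(email)
    # compare_digest performs a constant-time comparison of the encoded bytes.
    if user is None or not secrets.compare_digest(
        user["password"].encode(), password.encode()
    ):
        return AuthResult("failure", reason="invalid credentials")
    if user["locked"]:
        return AuthResult("failure", reason="account locked")
    return AuthResult("success", token=secrets.token_urlsafe(32))


# Edge-case tests added after the first two pass.
def test_empty_credentials_return_failure():
    result = authenticate("", "")
    assert result.status == "failure"
    assert result.token is None


def test_locked_account_returns_failure():
    result = authenticate("locked@example.com", "correct_password")
    assert result.status == "failure"
    assert result.reason == "account locked"
```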

Writing tests before code forces developers to think through expected behavior upfront. Defects are caught at the unit level before they propagate.

The constraint of writing testable code naturally produces smaller, more modular functions with clear responsibilities.

Every feature ships with tests that catch regressions the moment they are introduced, so the safety net grows with every sprint.

When a test fails, the scope is narrow. Developers know exactly which unit broke and why, reducing investigation time from hours to minutes.

The test suite describes what every function does, how it handles edge cases, and what inputs produce what outputs. It stays current because failing tests force updates.

Behavior-Driven Development is a methodology that extends TDD by shifting the focus from code correctness to system behavior as experienced by the end user. Instead of starting with technical test cases, BDD starts with collaborative conversations between developers, testers, and business stakeholders to define expected system behavior in a structured, human-readable format.

BDD typically uses Gherkin syntax, a domain-specific language built around Given, When, Then statements, to express behavior scenarios that anyone on the team can read and understand regardless of technical background.

The critical innovation of BDD is that it bridges the communication gap between technical and non-technical stakeholders. Business analysts, product managers, and QA professionals can all participate in defining and validating system behavior without needing to read or write code.

Developers, testers, and business stakeholders gather to define expected behavior for a feature. This conversation surfaces edge cases, assumptions, and acceptance criteria that might otherwise be missed.

The team documents the agreed behavior using Given, When, Then syntax. Each scenario describes a specific user interaction and its expected outcome.

Developers write code that maps each Gherkin step to executable test logic. These step definitions connect the human-readable scenarios to actual application code.
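Frameworks such as Cucumber and behave handle this binding with their own decorators and matching rules. The sketch below imitates the general idea with a hypothetical `step()` decorator and `run_step()` runner. These are not the API of any real framework. Each pattern is a regular expression, and any captured groups are passed to the step function as arguments.

```python
import re
from typing import Callable, Dict, List, Tuple

# Hypothetical step registry: each entry pairs a compiled regex with the
# function that implements the matching Gherkin step.
_STEPS: List[Tuple["re.Pattern[str]", Callable[..., None]]] = []
_KEYWORDS = ("Given ", "When ", "Then ", "And ", "But ")


def step(pattern: str) -> Callable:
    """Decorator that binds a function to a step-text pattern."""
    def register(func: Callable[..., None]) -> Callable[..., None]:
        _STEPS.append((re.compile(pattern), func))
        return func
    return register


def run_step(line: str, context: Dict) -> None:
    """Strip the Gherkin keyword, find the matching definition, and call it."""
    text = line.strip()
    for keyword in _KEYWORDS:
        if text.startswith(keyword):
            text = text[len(keyword):]
            break
    for pattern, func in _STEPS:
        match = pattern.fullmatch(text)
        if match:
            func(context, *match.groups())  # captured groups become arguments
            return
    raise LookupError(f"No step definition matches: {text!r}")


@step(r"the customer has items in their shopping cart")
def given_items_in_cart(context: Dict) -> None:
    context["cart"] = ["sample item"]


@step(r'the customer clicks "([^"]+)"')
def when_customer_clicks(context: Dict, label: str) -> None:
    context["clicked"] = label


ctx: Dict = {}
run_step("Given the customer has items in their shopping cart", ctx)
run_step('And the customer clicks "Place Order"', ctx)
```

An unmatched step raises an error instead of being skipped silently, which is roughly how BDD runners report undefined steps.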

With scenarios defined, the team follows the Red, Green, Refactor cycle. Scenarios fail initially, code is implemented to make them pass, and the code is refactored for quality.

Passing BDD scenarios serve as living documentation of system behavior. As requirements evolve, scenarios are updated collaboratively, keeping the entire team aligned.

```gherkin
Feature: Checkout Process

  Scenario: Successful purchase with valid payment
    Given the customer has items in their shopping cart
    And the customer is on the checkout page
    When the customer enters valid payment details
    And the customer clicks "Place Order"
    Then the order should be confirmed
    And the customer should receive an order confirmation email

  Scenario: Payment declined with insufficient funds
    Given the customer has items in their shopping cart
    And the customer is on the checkout page
    When the customer enters a payment method with insufficient funds
    And the customer clicks "Place Order"
    Then the payment should be declined
    And the customer should see an error message with retry options
```
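Behind these scenarios, step definitions drive the application and check its state. The sketch below writes that logic as plain Python test functions, keeping each Gherkin line as a comment beside the code it maps to. The `Checkout` and `PaymentMethod` classes are a hypothetical in-memory stand-in for a real checkout service. "Valid payment details" is simplified to a card balance that covers the order total.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PaymentMethod:
    balance_cents: int  # hypothetical: available funds on the card


@dataclass
class Checkout:
    cart_cents: List[int] = field(default_factory=list)  # item prices in cents
    order_confirmed: bool = False
    error: Optional[str] = None
    emails_sent: List[str] = field(default_factory=list)

    def place_order(self, payment: PaymentMethod) -> None:
        total = sum(self.cart_cents)
        if payment.balance_cents < total:
            self.error = "Payment declined: insufficient funds. Retry or choose another card."
            return
        payment.balance_cents -= total
        self.order_confirmed = True
        self.emails_sent.append("order confirmation")


def test_successful_purchase_with_valid_payment():
    # Given the customer has items in their shopping cart
    # And the customer is on the checkout page
    checkout = Checkout(cart_cents=[1999, 500])
    # When the customer enters valid payment details
    payment = PaymentMethod(balance_cents=10_000)
    # And the customer clicks "Place Order"
    checkout.place_order(payment)
    # Then the order should be confirmed
    assert checkout.order_confirmed
    # And the customer should receive an order confirmation email
    assert "order confirmation" in checkout.emails_sent


def test_payment_declined_with_insufficient_funds():
    # Given the customer has items in their shopping cart
    # And the customer is on the checkout page
    checkout = Checkout(cart_cents=[1999, 500])
    # When the customer enters a payment method with insufficient funds
    payment = PaymentMethod(balance_cents=1000)
    # And the customer clicks "Place Order"
    checkout.place_order(payment)
    # Then the payment should be declined
    assert not checkout.order_confirmed
    # And the customer should see an error message with retry options
    assert checkout.error is not None and "Retry" in checkout.error
```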

These scenarios are readable by every stakeholder on the team. The product manager can verify that the expected behavior matches business requirements. The developer knows exactly what to build. The tester knows exactly what to validate.

Collaborative scenario writing surfaces misalignments before code is written, not after.

Gherkin scenarios describe system behavior in plain language that business stakeholders, product managers, and QA can read and validate independently.

Acceptance criteria expressed as executable scenarios give stakeholders direct visibility into what was tested and whether it passed. No translation layer needed.

Starting from user behavior rather than code structure naturally prioritizes the workflows that matter most to the business.

Each scenario maps directly to a requirement, creating an audit trail from business need to test execution, which regulated industries often require.

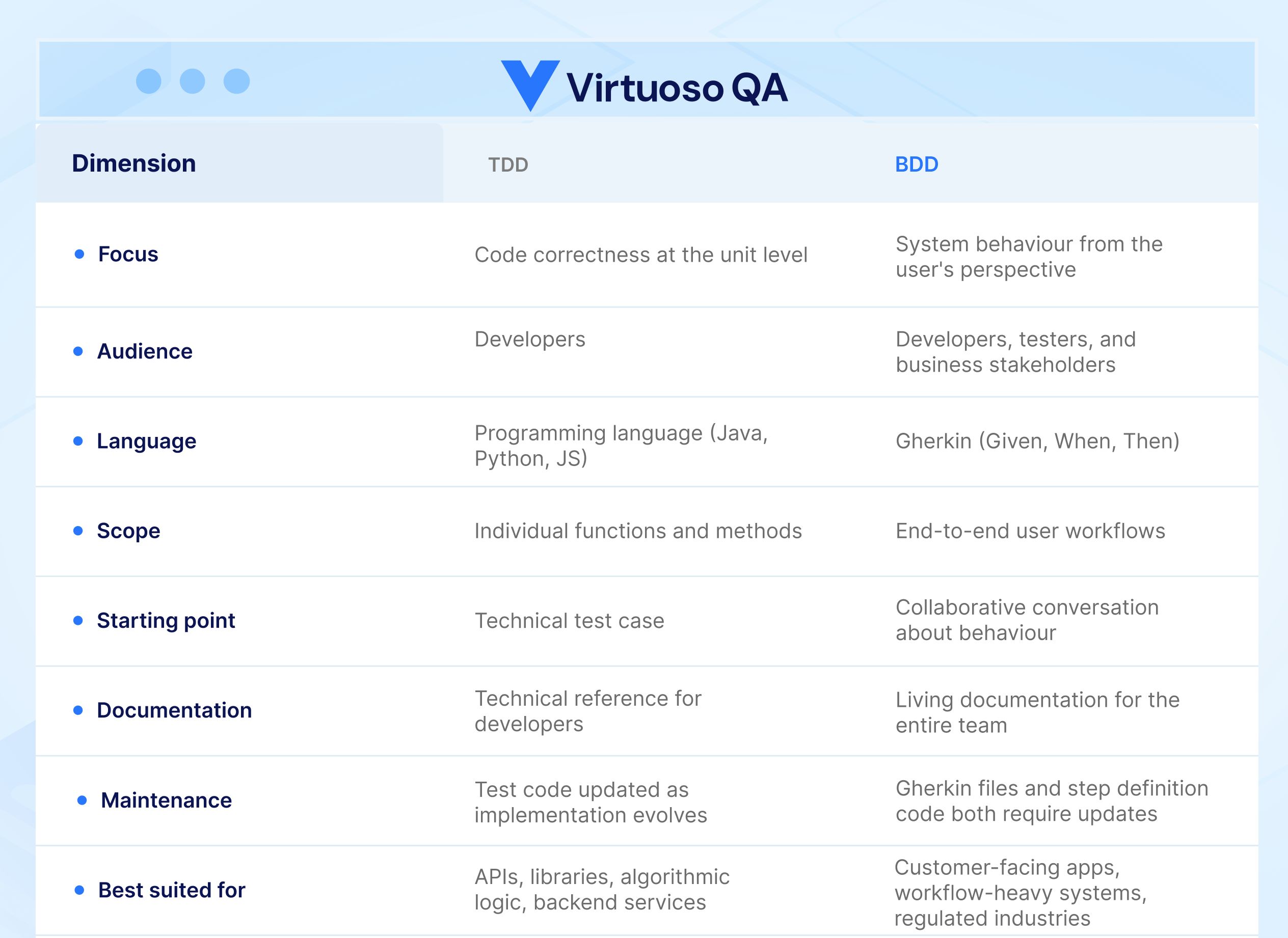

Understanding the core distinctions helps teams choose the right approach for their context.

TDD focuses on code correctness at the unit level. BDD focuses on system behavior from the user's perspective.

TDD is primarily a developer practice. BDD is a cross-functional practice involving developers, testers, and business stakeholders.

TDD tests are written in the same programming language as the application code. BDD scenarios are written in Gherkin, a plain-language format accessible to non-technical team members.

TDD typically targets fine-grained unit tests that validate individual functions or methods in isolation. BDD typically targets coarser-grained scenarios that validate end-to-end user workflows.

TDD starts with a technical test case. BDD starts with a collaborative conversation about expected behavior.

TDD tests serve as technical documentation for developers. BDD scenarios serve as living documentation for the entire team, including business stakeholders.

TDD tests are maintained by developers as code evolves. BDD scenarios require maintenance of both Gherkin feature files and step definition code, creating a dual maintenance layer.

BDD and TDD are not mutually exclusive. BDD can incorporate TDD within its workflow, with BDD scenarios defining high level behavior and TDD tests validating the underlying code units.
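A common way to combine the two pairs an outer, scenario-level test of user-visible behavior with inner unit tests, written test-first, for the logic that scenario depends on. In this sketch, `order_total` and `checkout_summary` are hypothetical functions. Prices are integer cents to avoid floating-point rounding.

```python
def order_total(prices_cents, discount_percent=0):
    """Unit developed with TDD: subtotal minus a percentage discount (rounded down)."""
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    subtotal = sum(prices_cents)
    return subtotal - subtotal * discount_percent // 100


# Inner loop (TDD): fine-grained unit tests pin down the calculation.
def test_order_total_without_discount():
    assert order_total([1999, 500]) == 2499


def test_order_total_with_discount():
    assert order_total([1000, 1000], discount_percent=10) == 1800


def test_invalid_discount_rejected():
    try:
        order_total([1000], discount_percent=150)
    except ValueError:
        return
    raise AssertionError("expected ValueError")


# Outer loop (BDD): user-visible behavior built on top of the unit above.
def checkout_summary(prices_cents, discount_percent=0):
    total = order_total(prices_cents, discount_percent)
    return f"Order total: ${total // 100}.{total % 100:02d}"


def test_scenario_customer_sees_discounted_total():
    # Given a cart with two items priced $10.00 each
    # When the customer applies a 10% discount at checkout
    summary = checkout_summary([1000, 1000], discount_percent=10)
    # Then the checkout page shows the discounted total
    assert summary == "Order total: $18.00"
```

If the scenario test fails but the unit tests pass, the defect lies in how the pieces are composed, not in the calculation itself.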

TDD is the right choice when the primary concern is code quality and correctness at the unit level. It excels in scenarios where the development team needs a disciplined approach to writing reliable, well-structured code with comprehensive regression coverage.

TDD works best when the team has strong development expertise, requirements are well defined at the technical level, the focus is on internal code quality rather than stakeholder alignment, and the application architecture benefits from rigorous unit-level validation.

TDD is particularly effective for API development, library and framework development, algorithmic logic, and any context where precise, isolated validation of code behavior is the priority.

BDD is the right choice when cross-functional alignment is critical and the team needs a shared language for defining and validating system behavior. It excels in environments where communication gaps between business and technical teams lead to misunderstandings, rework, and missed requirements.

BDD works best when multiple stakeholders need visibility into what the software does, acceptance criteria are expressed in business terms rather than technical specifications, the application involves complex user workflows that require end-to-end validation, and the organization values living documentation that evolves with the product.

BDD is particularly effective for customer-facing applications, workflow-heavy enterprise systems, regulated industries where traceability between requirements and tests is mandatory, and Agile teams practicing user-story-driven development.

Despite their strengths, both TDD and BDD face persistent challenges in enterprise environments that neither methodology was originally designed to solve.

TDD requires developers who are disciplined enough to write tests before code and skilled enough to design effective test cases. BDD requires teams to maintain both Gherkin scenarios and the step definition code that maps them to executable logic. Both demand specialized expertise that many teams lack.

As applications grow, test suites grow with them. TDD unit test suites can reach thousands of tests that must be maintained as code evolves. BDD step definitions must be updated whenever the application's behavior or UI changes. Industry data shows that teams spend 60% or more of their QA time on maintenance rather than new test creation, regardless of whether they use TDD, BDD, or both.

Implementing TDD and BDD requires configuring and maintaining testing frameworks, managing test data, integrating with CI/CD pipelines, and building reporting infrastructure. This overhead multiplies across large enterprise environments with dozens of applications and hundreds of team members.

BDD's promise is that scenarios are readable by everyone. In practice, Gherkin scenarios often become so technical and implementation-specific that they lose their value as a communication tool. Step definitions become brittle glue code that breaks whenever the UI changes.

AI-native testing platforms do not replace TDD or BDD. They fulfill the original promises that both methodologies set out to deliver, while eliminating the overhead that prevents most teams from realizing those promises at scale.

BDD introduced the idea that tests should be written in language everyone understands. AI-native platforms take this further by enabling teams to write and execute tests in plain English without the intermediate step of coding step definitions. There is no Gherkin to maintain, no step definition code to debug, and no framework configuration to manage.

When a tester writes "Navigate to the login page, enter valid credentials, and verify the dashboard loads," an AI-native platform understands the intent, identifies the correct UI elements, and executes the test. This is BDD's vision of collaborative, accessible testing, delivered without the glue code.

The maintenance burden that plagues both TDD and BDD test suites is fundamentally a problem of brittle element identification. When the UI changes, tests break. AI-native self-healing technology solves this by using multiple identification techniques, including visual analysis, DOM structure, and contextual data, to automatically adapt tests when the application changes. With ~95% accuracy in auto-updating broken locators, the maintenance spiral that consumes 60% of QA time is reduced to a fraction.
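The general idea of identifying an element by several attributes, rather than by a single locator, can be sketched in a few lines. This is not Virtuoso QA's algorithm, which is not described here. The attribute names, weights, and threshold are hypothetical. The sketch also uses only attribute matching, whereas the platforms described above also draw on visual and contextual signals.

```python
from typing import Dict, List, Optional

Element = Dict[str, str]  # attribute name -> value for one UI element

# Hypothetical weights: how much a match on each attribute counts.
WEIGHTS = {"id": 3.0, "name": 2.0, "text": 2.0, "nearby_label": 1.5, "tag": 1.0}


def score(recorded: Element, candidate: Element) -> float:
    """Sum the weights of recorded attributes that the candidate still matches."""
    return sum(
        weight
        for attr, weight in WEIGHTS.items()
        if attr in recorded and candidate.get(attr) == recorded[attr]
    )


def find_element(recorded: Element, page: List[Element],
                 threshold: float = 3.0) -> Optional[Element]:
    """Prefer an exact id match; otherwise fall back to the best-scoring element."""
    for element in page:
        if "id" in recorded and element.get("id") == recorded["id"]:
            return element
    best = max(page, key=lambda el: score(recorded, el), default=None)
    if best is not None and score(recorded, best) >= threshold:
        return best
    return None  # no confident match: report a failure instead of guessing


# The login button's id changed in a redesign, but its text, tag,
# and neighboring label did not.
recorded_button = {"id": "login-btn", "text": "Log in", "tag": "button",
                   "nearby_label": "Password"}
page_after_redesign = [
    {"id": "help-link", "text": "Help", "tag": "a"},
    {"id": "signin-button", "text": "Log in", "tag": "button",
     "nearby_label": "Password"},
]
healed = find_element(recorded_button, page_after_redesign)
```

The threshold is the design choice that matters most. If it is set too low, a test may silently act on the wrong element. If it is set too high, every cosmetic change still breaks the test.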

Enterprise teams that have adopted self-healing automation report reductions in maintenance effort. That is time returned directly to coverage expansion, exploratory testing, and strategic quality work.

TDD's core principle is that tests should exist before code. AI-native platforms extend this by autonomously generating test steps from application analysis, requirements documents, and user stories. Virtuoso QA's StepIQ technology analyzes the application under test and suggests complete test steps based on UI elements, application context, and user behavior patterns.

This means teams can achieve comprehensive test coverage at a pace that manual TDD authoring cannot match.

For teams with existing BDD investments in Cucumber, SpecFlow, or similar frameworks, AI-native platforms offer migration pathways that convert legacy BDD test scripts into fully executable, self-healing test journeys. This preserves the behavioral logic captured in existing scenarios while eliminating the step definition maintenance burden that makes BDD implementations increasingly costly over time.

The choice is not binary. Here is how to think about it.

Use TDD when your team's primary goal is unit-level code correctness, you have experienced developers who embrace test-first discipline, and the application is primarily backend or algorithmic in nature.

Use BDD when cross-functional alignment is the bottleneck, business stakeholders need direct visibility into test coverage, and regulatory traceability between requirements and tests is required.

Adopt AI-native testing when test maintenance is consuming more time than test creation, the skills gap prevents scaling automation across the team, you need to achieve comprehensive coverage within Agile sprint timelines, and your enterprise applications span complex business workflows across ERP, CRM, or custom web systems.

The most effective enterprise teams layer all three: TDD for unit-level code quality, BDD principles for stakeholder alignment, and an AI-native platform such as Virtuoso QA for end-to-end functional testing that scales without the maintenance burden.
