Test cases are a set of conditions under which testers verify software functionality. With this guide, explore test case formats, examples, and templates.

A test case is a detailed set of conditions, actions, and expected results used to validate specific software functionality. It defines exactly what to test, how to test it, and what success looks like. Well-written test cases enable consistent, repeatable validation, whether they are executed manually or automated. Traditional test case documentation requires extensive manual effort, becomes outdated quickly, and creates barriers between testers and stakeholders. AI-native testing platforms now let teams create executable test cases in natural language. This eliminates documentation overhead and lets business users define validation scenarios without technical expertise, accelerating test creation by 80-90% while improving clarity and maintainability.

A test case is a specific set of conditions under which a tester determines whether software functions correctly. It documents the inputs, execution steps, preconditions, and expected outcomes required to validate a particular aspect of application functionality.

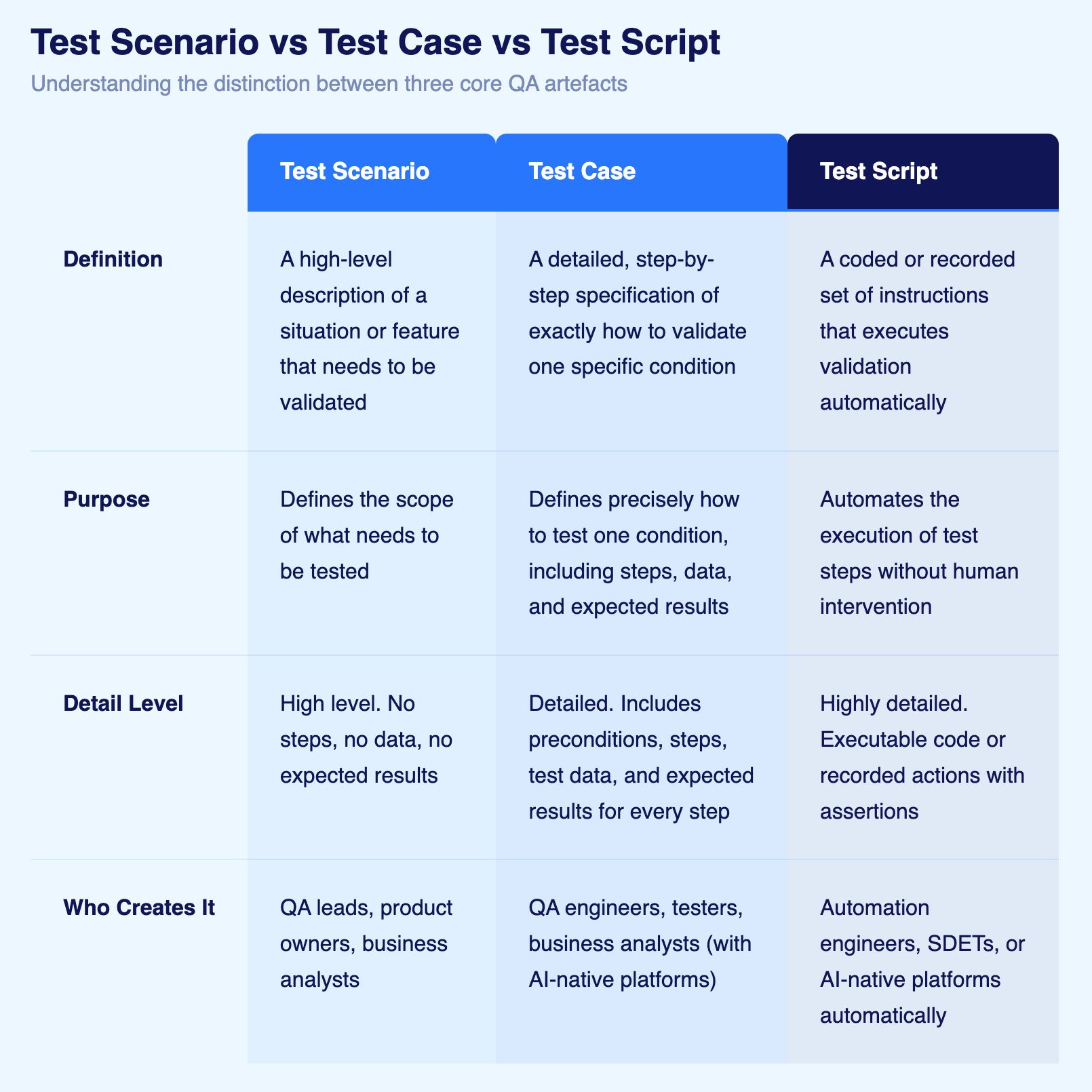

These three terms are frequently used interchangeably, but they mean different things. Confusing them leads to poorly scoped test planning and gaps in coverage.

Software can fail in more ways than most teams plan for. A feature can work correctly but crash under load. An interface can function as designed but be unusable for someone relying on a screen reader. A database can accept inputs correctly but return inconsistent results under concurrent queries. Different types of test cases exist because different failure modes require different validation approaches. Knowing which types apply to your context is what separates intentional test coverage from accidental coverage.

Functional test cases verify that specific features of an application work as designed. They are the most common type and the natural starting point for any test suite because they map directly to requirements, user stories, and acceptance criteria.

A functional test case for a login feature would verify that a registered user can enter valid credentials and reach the dashboard. It checks the output against the expected behaviour, nothing more.

Integration test cases verify that separate components of a system work correctly together. Individual modules may pass functional tests in isolation and still break when they interact. Integration testing finds the failures that live in the connections, not the components.

These tests are especially important in architectures with microservices, third-party APIs, or multiple databases, where integration points multiply and each one is a potential failure.

User interface (UI) test cases verify that the visual layer of an application renders correctly and responds to user interaction as expected. A feature can work perfectly in the backend and still fail if the button does not render, the form field does not accept input, or an error message appears in the wrong location.

These tests are particularly critical after UI redesigns, framework migrations, or CSS updates, where visual behaviour can change without any functional code changing.

Usability test cases evaluate whether an application is intuitive to use. Unlike functional or UI tests that check whether things work, usability tests assess whether real users can accomplish their goals without friction, confusion, or the need for documentation.

These tests require testers to adopt a user perspective rather than a QA perspective. The question is not "does it work?" but "can someone unfamiliar with this application figure out how to use it?"

Accessibility test cases verify that an application can be used by people with disabilities, including those who rely on screen readers, keyboard navigation, voice controls, or other assistive technologies. They also validate compliance with standards such as WCAG (Web Content Accessibility Guidelines), the European Accessibility Act (EAA), and the Americans with Disabilities Act (ADA).

Accessibility failures are rarely visible in functional testing. An application can pass every functional test and still be completely unusable for a significant portion of the population.

Performance test cases measure how a system behaves under varying levels of load and stress. They validate speed, responsiveness, stability, and resource usage. An application may work correctly with five users and fail silently with five thousand.

Performance testing catches bottlenecks, memory leaks, and scalability limits before real users experience them in production.

Security test cases identify vulnerabilities that could be exploited by attackers. They simulate known attack vectors including SQL injection, cross-site scripting, authentication bypass, and unauthorised data access. A feature can pass all functional tests while containing a critical vulnerability that exposes customer data.

In regulated industries, security test cases are not optional. GDPR, HIPAA, PCI DSS, and similar frameworks require demonstrable security testing as part of compliance.

Database test cases verify that data is stored, retrieved, updated, and deleted correctly. They ensure data integrity, consistency, and accuracy at the persistence layer. Application-level tests may pass while the underlying data is being written incorrectly, duplicated, or silently lost.

These tests are critical for financial systems, healthcare records, and any application where data accuracy has direct real-world consequences.

Regression test cases verify that existing functionality continues to work correctly after code changes. Every new feature, bug fix, or dependency update is a potential source of unintended side effects. Regression testing is the mechanism that catches those side effects before they reach users.

Regression suites grow with each release and are the primary candidates for automation, since they must be executed repeatedly and consistently.

User acceptance test cases verify that software meets actual business requirements from the perspective of the end user or business stakeholder. They are the final validation before release, confirming not just that the system works technically but that it delivers what was needed.

UAT is typically performed by business users, product owners, or QA team members adopting the user perspective. These tests reflect real-world workflows, including variations and edge cases that technical testing may not anticipate.

The types above define what you are testing. There is a second dimension that defines how you approach the test: whether you are confirming expected behaviour, challenging the system with invalid inputs, or pushing it beyond its intended boundaries.

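Any test type can be written in all three approaches, and a login test shows this clearly. A positive test case enters valid credentials and confirms the user reaches the dashboard; a negative test case enters an incorrect password and confirms an error message appears; and a destructive test case submits extreme or malformed input, such as an extremely long username, and confirms the system rejects it without crashing.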

A balanced test suite covers all three approaches across all relevant types. Teams that only write positive test cases discover their system works under ideal conditions. Teams that include negative and destructive tests discover how their system behaves when conditions are not ideal, which is when production failures actually occur.
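The three approaches can be sketched as one table of cases run against a single login function. This is a minimal sketch, not a real application: `authenticate` is a hypothetical in-memory stand-in for a login endpoint, and the 254-character input limit is an assumed rule chosen only to give the destructive cases something to hit.

```python
# Minimal sketch: `authenticate` is a hypothetical stand-in for a real login
# endpoint, backed by an in-memory dict instead of a user database.
REGISTERED_USERS = {"user@example.com": "ValidPass123"}
MAX_FIELD_LENGTH = 254  # assumed input limit, for illustration only


def authenticate(username: str, password: str) -> str:
    """Return "dashboard" on success, or an error code on failure."""
    if len(username) > MAX_FIELD_LENGTH or len(password) > MAX_FIELD_LENGTH:
        return "error:input_too_long"
    if username in REGISTERED_USERS and REGISTERED_USERS[username] == password:
        return "dashboard"
    return "error:invalid_credentials"


# One feature, three approaches: (approach, username, password, expected outcome)
LOGIN_CASES = [
    ("positive", "user@example.com", "ValidPass123", "dashboard"),
    ("negative", "user@example.com", "WrongPass", "error:invalid_credentials"),
    ("negative", "", "", "error:invalid_credentials"),
    ("destructive", "a" * 10_000, "ValidPass123", "error:input_too_long"),
    ("destructive", "' OR '1'='1", "' OR '1'='1", "error:invalid_credentials"),
]


def run_login_cases():
    """Execute every case and return (approach, passed) pairs."""
    return [
        (approach, authenticate(user, pwd) == expected)
        for approach, user, pwd, expected in LOGIN_CASES
    ]


if __name__ == "__main__":
    for approach, passed in run_login_cases():
        print(f"{approach:<12} {'PASS' if passed else 'FAIL'}")
```

Keeping the cases as data rather than separate functions makes it easy to see at a glance whether a feature has positive, negative, and destructive coverage.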

Test Case ID: A unique identifier that enables reference and tracking. Use clear naming conventions such as TC-001, TC-LOGIN-01, or USER-REG-001.

Example: TC-CHECKOUT-CC-01 (Test Case for Checkout using Credit Card, first scenario)
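A naming convention only helps if it is applied consistently. The sketch below checks IDs against a hypothetical pattern inferred from the examples above (uppercase, hyphen-separated segments ending in a two- or three-digit number) and flags duplicates; the pattern is an assumption to adapt to your own convention.

```python
import re
from collections import Counter

# Hypothetical convention inferred from the examples: uppercase segments
# joined by hyphens, ending in a two- or three-digit sequence number.
TEST_CASE_ID = re.compile(r"[A-Z]+(?:-[A-Z]+)*-\d{2,3}")


def is_valid_test_case_id(test_id: str) -> bool:
    """True if the whole ID matches the assumed convention."""
    return TEST_CASE_ID.fullmatch(test_id) is not None


def find_duplicate_ids(test_ids: list[str]) -> list[str]:
    """IDs that appear more than once; the field is only useful if IDs are unique."""
    return sorted(tid for tid, count in Counter(test_ids).items() if count > 1)


if __name__ == "__main__":
    for tid in ["TC-001", "TC-LOGIN-01", "USER-REG-001", "TC-CHECKOUT-CC-01", "tc-login-1"]:
        print(tid, is_valid_test_case_id(tid))
```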

Test Case Name: A concise, descriptive summary of what the test validates. Use action-oriented language that clearly states the scenario.

Description: A brief explanation of the test's purpose and scope that provides context beyond the name.

Example: "Verify that registered users can successfully complete purchases using valid credit card payment, receive order confirmation, and see order in purchase history."

Preconditions: Conditions that must exist before test execution begins, including system state, user accounts, test data, and configuration requirements.

Test Data: Specific data values used during test execution. Concrete values eliminate ambiguity and ensure consistent execution.

Test Steps: Sequential actions the tester performs. Each step describes one specific action in clear, unambiguous language.

Format: Step [number]: [single action], followed by Expected Result: [observable outcome]

Expected Results: An explicit description of correct system behavior for each step. It defines the success criteria so testers know whether the test passed or failed.

Actual Results: Space for documenting what actually happened during execution. This field is completed during test execution, not during test case creation.

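Status: The outcome of test execution, one of Pass, Fail, Blocked, or Skipped.

Definitions:

- Pass: Actual results match the expected results for every step.

- Fail: At least one actual result differs from its expected result.

- Blocked: The test cannot be executed because of an external issue, such as an unavailable environment, missing test data, or an unresolved defect.

- Skipped: The test was intentionally not executed in this cycle.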
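The four outcomes can be derived mechanically from per-step results. This is a minimal sketch under assumed rules: `None` in the actual results marks a step that could not be run, and the first problem step decides the status. Real test management tools apply their own rules.

```python
from enum import Enum
from itertools import zip_longest
from typing import Optional


class Status(Enum):
    PASS = "Pass"
    FAIL = "Fail"
    BLOCKED = "Blocked"
    SKIPPED = "Skipped"


def derive_status(expected: list[str], actual: list[Optional[str]], skipped: bool = False) -> Status:
    """
    Derive an execution outcome from per-step results (assumed rules).

    expected: the expected result written for each step.
    actual:   the observed result per step; None means the step could not be run.
    skipped:  True if the test was deliberately not executed this cycle.
    """
    if skipped:
        return Status.SKIPPED
    # zip_longest pads the shorter list with None, so steps that were never
    # reached count as "could not be run" rather than silently passing.
    for exp, act in zip_longest(expected, actual):
        if act is None:
            return Status.BLOCKED
        if act != exp:
            return Status.FAIL
    return Status.PASS
```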

Priority: Business importance, which determines testing sequence. Typically High, Medium, or Low (or P1, P2, P3).

Related Requirements: Links to the user stories, requirements documents, or acceptance criteria this test validates, enabling traceability.

Example: Requirements: US-123, AC-456
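The fields described above map naturally onto a simple record type, which is roughly what a test management tool stores behind its forms. This is an illustrative sketch, not any tool's schema; the class names are hypothetical, and the check for missing expected results is one example of the kind of validation such a structure makes easy.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Step:
    action: str
    expected: str
    actual: Optional[str] = None  # filled in during execution, not authoring


@dataclass
class DocumentedTestCase:
    test_id: str
    title: str
    description: str
    priority: str  # e.g. "High", "Medium", "Low"
    requirements: list[str] = field(default_factory=list)
    preconditions: list[str] = field(default_factory=list)
    test_data: dict[str, str] = field(default_factory=dict)
    steps: list[Step] = field(default_factory=list)
    status: Optional[str] = None  # Pass / Fail / Blocked / Skipped once executed

    def steps_missing_expected_results(self) -> list[int]:
        """1-based numbers of steps that have no expected result written."""
        return [i for i, s in enumerate(self.steps, start=1) if not s.expected.strip()]


# Abbreviated version of the TC-LOGIN-001 template
LOGIN_TC = DocumentedTestCase(
    test_id="TC-LOGIN-001",
    title="User Login with Valid Credentials",
    description="Verify a registered user can log in and reach the dashboard.",
    priority="High",
    requirements=["US-101"],
    preconditions=["User account exists", "User is logged out"],
    test_data={"username": "user@example.com", "password": "ValidPass123"},
    steps=[
        Step("Click 'Login' button", "Login page displays"),
        Step("Enter username and password", "Fields accept input"),
        Step("Click 'Submit' button", "User is redirected to the dashboard"),
    ],
)
```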

Step-by-step format: The traditional format, with detailed step-by-step instructions. It is the most common format for manual test execution.

Test Case ID: TC-LOGIN-001

Test Case Name: User Login with Valid Credentials

Priority: High

Related Requirements: US-101 (User Authentication)

Preconditions:

- User account exists with username "user@example.com" and password "ValidPass123"

- Application is accessible and running

- User is logged out

Test Data:

- Username: user@example.com

- Password: ValidPass123

Test Steps:

Step 1: Navigate to application URL (https://app.example.com)

Expected Result: Application homepage loads with "Login" button visible in top right corner

Step 2: Click "Login" button

Expected Result: Login page displays with username field, password field, and "Submit" button

Step 3: Enter username "user@example.com" in username field

Expected Result: Username field accepts input and displays entered value

Step 4: Enter password "ValidPass123" in password field

Expected Result: Password field accepts input and displays masked characters (dots or asterisks)

Step 5: Click "Submit" button

Expected Result:

- User is redirected to the dashboard page

- Welcome message displays: "Welcome, Test User"

- User menu shows logged-in state with user email

- Session token created (verify via browser developer tools if needed)

Actual Results: [To be filled during execution]

Status: [Pass/Fail/Blocked]

Executed By: _____________

Date: _____________

Notes: _____________

BDD format: Uses the Given-When-Then structure, emphasizing behavior specification. It is popular in Agile teams practicing behavior-driven development (BDD).

Feature: User Authentication

Scenario: Successful login with valid credentials

Given the user is on the login page

And the user has a registered account with username "user@example.com"

When the user enters username "user@example.com"

And the user enters password "ValidPass123"

And the user clicks the "Submit" button

Then the user should be redirected to the dashboard

And the welcome message should display "Welcome, Test User"

And the user menu should show the user email "user@example.com"

And a session token should be created

Priority: High

Requirements: US-101

Test Data: See attached data file
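BDD tools make Given-When-Then scenarios executable by binding each step sentence to a function, usually through a pattern match that also captures quoted values. Tools such as Cucumber, behave, and pytest-bdd each have their own APIs for this; the sketch below hand-rolls a tiny step registry only to show the idea, and is not any tool's real API. The login logic inside `click_submit` is a hypothetical stand-in for the application.

```python
import re

# Hypothetical mini step registry: (compiled pattern, step function) pairs.
STEP_DEFINITIONS = []


def step(pattern):
    """Decorator that registers a function for step text matching `pattern`."""
    def register(fn):
        STEP_DEFINITIONS.append((re.compile(pattern), fn))
        return fn
    return register


@step(r"the user is on the login page")
def on_login_page(ctx):
    ctx["page"] = "login"


@step(r'the user enters username "(.+)"')
def enter_username(ctx, value):
    ctx["username"] = value


@step(r'the user enters password "(.+)"')
def enter_password(ctx, value):
    ctx["password"] = value


@step(r'the user clicks the "Submit" button')
def click_submit(ctx):
    # Stand-in for the real application: only one hard-coded account works.
    valid = ctx.get("username") == "user@example.com" and ctx.get("password") == "ValidPass123"
    ctx["page"] = "dashboard" if valid else "login"


@step(r"the user should be redirected to the dashboard")
def should_see_dashboard(ctx):
    assert ctx.get("page") == "dashboard", f"expected dashboard, got {ctx.get('page')}"


def run_scenario(lines):
    """Strip the Given/When/Then/And keyword and dispatch each line to its step."""
    ctx = {}
    for line in lines:
        text = re.sub(r"^(Given|When|Then|And)\s+", "", line.strip())
        for pattern, fn in STEP_DEFINITIONS:
            match = pattern.fullmatch(text)
            if match:
                fn(ctx, *match.groups())
                break
        else:
            raise LookupError(f"No step definition for: {text!r}")
    return ctx


SCENARIO = [
    "Given the user is on the login page",
    'When the user enters username "user@example.com"',
    'And the user enters password "ValidPass123"',
    'And the user clicks the "Submit" button',
    "Then the user should be redirected to the dashboard",
]
```

Because steps are matched by sentence, the same `the user enters password "..."` definition serves every scenario that uses that wording, which is what lets business-readable scenarios stay reusable.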

Exploratory testing charter: A lightweight format for exploratory testing sessions. It defines a mission, time box, and areas to explore without prescriptive steps.

Charter: Explore checkout process for payment handling edge cases

Time Box: 90 minutes

Mission:

Investigate how the checkout process handles various payment scenarios beyond happy path, focusing on edge cases and unusual inputs.

Areas to Explore:

- Expired credit cards

- Declined transactions

- Network timeouts during payment

- Multiple rapid payment submissions

- Browser back button during payment processing

- Session timeout during checkout

- Invalid CVV codes

- International credit cards

- Alternative payment methods

Success Criteria:

- Document all edge cases discovered

- Log defects for any error handling issues

- Identify usability problems in error messages

- Verify security controls around payment data

Notes: [Documented during session]

Defects Found: [List defect IDs]

Questions Raised: [Areas requiring clarification]

Checklist format: A simplified format that lists validations without detailed steps. Useful for smoke testing or for experienced testers.

Feature: User Registration - Smoke Test Checklist

Test Conditions to Verify:

☐ Registration form loads with all required fields

☐ Email validation prevents invalid email formats

☐ Password strength indicator shows real-time feedback

☐ "Username already exists" error displays for duplicate usernames

☐ Successful registration creates user account in database

☐ Confirmation email is sent within 60 seconds

☐ User can log in immediately after registration

☐ User profile page displays correct information

☐ Account appears in admin user management panel

Priority: Critical

Requirements: US-200 series

Execution Time: ~15 minutes

Write test cases in simple, unambiguous language anyone can understand. Avoid technical jargon unless writing for technical audiences.

Good: "Click the 'Add to Cart' button located below the product image"

Poor: "Trigger the onclick event handler for the DOM element with class 'btn-cart'"

Each test step should represent one action. Don't combine multiple actions into single steps.

Wrong: "Login and navigate to settings and update password"

Right:

Step 1: Log in

Step 2: Navigate to settings

Step 3: Update password

Don't assume testers know what should happen. Explicitly state expected outcomes for each action.

Incomplete: "Click login button"

Complete: "Click login button → Expected: User is redirected to the dashboard page with a welcome message displayed"

Provide specific, realistic test data rather than placeholders or generic values.

Vague: "Enter a valid email address"

Specific: "Enter email address: customer@example.com"

Adjust technical detail based on who executes tests. Manual testers need explicit instructions. Experienced testers can handle higher-level descriptions.

For Manual Testers: "Click the blue 'Submit' button at the bottom right of the form"

For Experienced Testers: "Submit the registration form"

Document everything that must be true before testing begins. Don't assume testers know setup requirements.

Link every test case to specific requirements or user stories. This traceability ensures complete requirements coverage.
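Traceability pays off when it can be queried. A minimal sketch, assuming links are kept as a mapping from test case ID to requirement IDs (the IDs below are hypothetical), finds requirements no test validates and test cases linked to nothing.

```python
def uncovered_requirements(requirements: set[str], links: dict[str, list[str]]) -> set[str]:
    """Requirements that no test case claims to validate."""
    covered = {req for reqs in links.values() for req in reqs}
    return requirements - covered


def untraced_test_cases(links: dict[str, list[str]]) -> list[str]:
    """Test cases that are not linked to any requirement."""
    return sorted(tc for tc, reqs in links.items() if not reqs)


# Hypothetical traceability data: test case ID -> requirement IDs it validates
REQUIREMENTS = {"US-101", "US-123", "AC-456", "US-201"}
LINKS = {
    "TC-LOGIN-001": ["US-101"],
    "TC-CHECKOUT-CC-01": ["US-123", "AC-456"],
    "TC-SMOKE-REG-01": [],
}
```

Both checks matter: an uncovered requirement is a coverage gap, while an untraced test case is either missing a link or no longer needed.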

Each test should execute independently without depending on other tests running first. Avoid test dependencies creating fragile test suites.

Wrong: Test Case 2 assumes Test Case 1 already created a user account

Right: Test Case 2 explicitly creates required user account in preconditions or setup
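The "right" approach can be sketched in code: each test builds fresh state and creates its own uniquely named account, so the tests pass in any order. `InMemoryUserStore` is a hypothetical stand-in for the application's user database.

```python
import uuid


class InMemoryUserStore:
    """Hypothetical stand-in for the application's user database."""

    def __init__(self):
        self._passwords = {}

    def create_user(self, email, password):
        if email in self._passwords:
            raise ValueError(f"User already exists: {email}")
        self._passwords[email] = password

    def change_password(self, email, new_password):
        if email not in self._passwords:
            raise KeyError(email)
        self._passwords[email] = new_password

    def login(self, email, password):
        return self._passwords.get(email) == password


def create_test_user(store):
    """Precondition step: every test creates its own uniquely named account."""
    email = f"user-{uuid.uuid4().hex[:8]}@example.com"
    store.create_user(email, "ValidPass123")
    return email


def test_login():
    store = InMemoryUserStore()  # fresh state, nothing inherited from other tests
    email = create_test_user(store)
    assert store.login(email, "ValidPass123")


def test_change_password():
    store = InMemoryUserStore()
    email = create_test_user(store)  # does NOT rely on test_login having run
    store.change_password(email, "NewPass456")
    assert store.login(email, "NewPass456")
    assert not store.login(email, "ValidPass123")
```

Generating a unique email per test also avoids collisions when the same suite runs in parallel against a shared environment.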

Problem: "Check that the system works correctly"

Solution: "Verify order confirmation displays order number, total amount, estimated delivery date, and shipping address"

Problem: Step describes action without stating what should happen

Solution: Every step includes explicit expected result defining success

Problem: One test case validates login, profile update, and logout in single test

Solution: Create separate test cases for each scenario enabling targeted testing

Problem: "Enter valid credentials"

Solution: "Enter username: test@example.com, password: TestPass123"

Problem: Steps assume tester knows navigation, system quirks, or business rules

Solution: Document all information needed for successful test execution

Problem: Test cases filled with technical jargon incomprehensible to business stakeholders

Solution: Use plain language stakeholders understand while maintaining precision

Test Case: Domestic Wire Transfer - Happy Path

Preconditions:

- Customer logged into online banking

- Source account (Checking #1234) has available balance of $50,000

- Destination account verified and saved

Test Data:

- Source Account: Checking #1234 (Balance: $50,000)

- Destination Account: 987654321 (Routing: 021000021)

- Transfer Amount: $5,000

- Transfer Date: Same day

Steps:

1. Navigate to "Transfers" section

Expected: Transfer page displays with transfer types listed

2. Select "Wire Transfer"

Expected: Wire transfer form displays with source/destination fields

3. Select source account "Checking #1234"

Expected: Current balance displays ($50,000)

4. Enter destination account "987654321" and routing "021000021"

Expected: System validates routing number, displays bank name

5. Enter amount "$5,000"

Expected: System validates sufficient funds, displays fees ($25)

6. Review transfer details and confirm

Expected: Confirmation screen shows all details for review

7. Authorize with secure code

Expected: Wire processes successfully, confirmation number generates

8. Verify account balances update

Expected: Source account shows $44,975 ($5,000 transfer + $25 fee deducted)

Pass Criteria: Transfer completes, balances update, confirmation received
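Expected results that involve money are worth computing rather than writing by hand, because a wrong expected value makes a correct system fail the test. A minimal sketch using `Decimal` (to avoid floating-point rounding), assuming, as this test case states, that both the transfer amount and the $25 fee are debited from the source account:

```python
from decimal import Decimal


def has_sufficient_funds(balance: Decimal, amount: Decimal, fee: Decimal) -> bool:
    """Step 5 check: the balance must cover both the transfer and the fee."""
    return balance >= amount + fee


def expected_source_balance(balance: Decimal, amount: Decimal, fee: Decimal) -> Decimal:
    """Step 8 expectation: transfer amount and fee are both debited from the source."""
    return balance - (amount + fee)


if __name__ == "__main__":
    opening, amount, fee = Decimal("50000"), Decimal("5000"), Decimal("25")
    print(has_sufficient_funds(opening, amount, fee))
    print(expected_source_balance(opening, amount, fee))
```

With the test data above, the expected balance is $50,000 − ($5,000 + $25) = $44,975.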

Test Case: Nurse Administers Scheduled Medication

Preconditions:

- Nurse logged into EHR system

- Patient admitted with active orders

- Scheduled medication due for administration

Test Data:

- Patient: John Smith (MRN: 12345)

- Medication: Lisinopril 10mg oral daily

- Administration Time: 08:00

Steps:

1. Scan patient wristband

Expected: Patient record displays with active orders

2. Navigate to Medication Administration Record (MAR)

Expected: MAR displays scheduled medications for current shift

3. Select Lisinopril 10mg from scheduled list

Expected: Medication details display with barcode scan prompt

4. Scan medication barcode

Expected: System verifies 5 rights (right patient, drug, dose, route, time)

5. Document administration

Expected: System timestamps administration, updates MAR status

6. Verify MAR updates

Expected: Medication marked "Given" with nurse signature and timestamp

Clinical Safety Validation: System prevents wrong medication, wrong dose, wrong patient
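The clinical safety validation amounts to comparing the active order with what was scanned at the bedside. A minimal sketch, assuming one record type for both and a hypothetical 30-minute tolerance around the scheduled time (real administration policies define their own windows), returns which of the five rights were violated so each one can be tested separately:

```python
from dataclasses import dataclass, replace
from datetime import datetime, timedelta

# Hypothetical tolerance around the scheduled time; real policies vary.
ADMINISTRATION_WINDOW = timedelta(minutes=30)


@dataclass(frozen=True)
class MedicationEvent:
    patient_mrn: str
    drug: str
    dose: str
    route: str
    time: datetime


def five_rights_violations(order: MedicationEvent, scanned: MedicationEvent) -> list[str]:
    """Compare the active order with what was scanned; return the rights violated."""
    violations = []
    if scanned.patient_mrn != order.patient_mrn:
        violations.append("patient")
    if scanned.drug != order.drug:
        violations.append("drug")
    if scanned.dose != order.dose:
        violations.append("dose")
    if scanned.route != order.route:
        violations.append("route")
    if abs(scanned.time - order.time) > ADMINISTRATION_WINDOW:
        violations.append("time")
    return violations


# Order from the test data above (the calendar date is arbitrary)
ORDER = MedicationEvent("12345", "Lisinopril", "10mg", "oral", datetime(2024, 1, 1, 8, 0))

if __name__ == "__main__":
    wrong_dose = replace(ORDER, dose="20mg")
    print(five_rights_violations(ORDER, wrong_dose))
```

Each negative test case (wrong patient, wrong drug, wrong dose, and so on) then changes exactly one field and expects exactly one violation.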

Test Case: In-Store Return of Online Purchase

Preconditions:

- Customer purchased item online 5 days ago (Order #12345)

- Item delivered and in original packaging

- Return window is 30 days

Test Data:

- Order Number: 12345

- Item: Wireless Mouse (SKU: WM-001)

- Original Price: $49.99

- Return Reason: Changed mind

Steps:

1. Store associate scans the order number or looks up the order by customer email

Expected: Order details display with eligible return items

2. Associate selects item for return

Expected: Return policy displays (30-day window confirmed)

3. Customer selects return reason "Changed mind"

Expected: System validates item condition requirements

4. Associate inspects item and confirms condition

Expected: System generates return authorization

5. System processes refund to original payment method

Expected: Refund confirmation displays, estimated 5-7 business days

6. Customer receives return receipt

Expected: Receipt shows returned item, refund amount, timeline

Validation: Inventory updates, order status changes, refund processes correctly
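This happy path covers day 5 of a 30-day window, but return-window defects usually sit at the edges. A minimal sketch, assuming the window counts calendar days from the purchase date and includes day 30 (check the actual policy), makes the boundary cases explicit:

```python
from datetime import date, timedelta

RETURN_WINDOW_DAYS = 30  # from the test case preconditions


def is_return_eligible(purchase_date: date, return_date: date,
                       window_days: int = RETURN_WINDOW_DAYS) -> bool:
    """Assumes the window counts calendar days from purchase, inclusive."""
    elapsed = (return_date - purchase_date).days
    return 0 <= elapsed <= window_days


# (days after purchase, expected eligibility): the happy path plus boundaries
BOUNDARY_CASES = [(5, True), (29, True), (30, True), (31, False), (-1, False)]

if __name__ == "__main__":
    purchased = date(2024, 3, 1)  # arbitrary example date
    for days, expected in BOUNDARY_CASES:
        actual = is_return_eligible(purchased, purchased + timedelta(days=days))
        print(days, "PASS" if actual == expected else "FAIL")
```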

Traditional test case documentation creates bottlenecks through manual writing, constant maintenance, and technical barriers limiting who can create tests.

AI-native platforms enable teams to write test cases in plain English without formal documentation structures or technical expertise.

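Traditional Approach: A formal template with a test case ID, preconditions, test data, and numbered steps, each paired with an expected result, written and maintained by hand.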

AI-Native Approach:

Verify customers can purchase products with saved credit cards

1. Login as existing customer

2. Add product to cart

3. Proceed to checkout

4. Select saved payment method

5. Confirm order

6. Verify order confirmation displays

7. Check email for confirmation

AI platform translates natural language into executable tests automatically. No formal templates. No technical barriers. Business users create tests.

AI-powered self-healing eliminates test case maintenance burden. When applications change, test cases adapt automatically without manual updates.

Traditional Challenge: Application changes require updating hundreds of test cases manually

AI-Native Solution: 95% self-healing accuracy means test cases continue working despite application changes

Test cases become living documentation automatically updated as tests execute. Results, screenshots, and execution evidence capture system behavior automatically.

Group related test cases by feature, user workflow, or business process. Logical organization enables efficient test selection and execution.

Store test cases in version control systems alongside code. Track changes, enable collaboration, and maintain history.

Schedule periodic reviews removing obsolete test cases, updating outdated scenarios, and identifying coverage gaps.

Link every test case to specific requirements ensuring complete validation coverage and supporting compliance audits.

Standardize test case IDs, names, and organization enabling team members to locate and reference tests easily.

Track which test cases find defects, which rarely execute, and which require frequent maintenance. Optimize test suites based on data.
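Suite optimization starts from a few simple counts per test case. A minimal sketch, assuming execution history is available as per-test totals (the records and thresholds below are hypothetical), sorts test cases into the three groups named above:

```python
from dataclasses import dataclass


@dataclass
class ExecutionStats:
    test_id: str
    executions: int
    defects_found: int
    maintenance_edits: int


def triage(stats, min_runs=5, max_edit_ratio=0.5):
    """Bucket test cases for review; thresholds are illustrative assumptions."""
    return {
        "finds_defects": [s.test_id for s in stats if s.defects_found > 0],
        "rarely_executed": [s.test_id for s in stats if s.executions < min_runs],
        "high_maintenance": [
            s.test_id for s in stats
            if s.executions and s.maintenance_edits / s.executions > max_edit_ratio
        ],
    }


# Hypothetical history for three test cases
HISTORY = [
    ExecutionStats("TC-LOGIN-001", executions=40, defects_found=3, maintenance_edits=2),
    ExecutionStats("TC-CHECKOUT-CC-01", executions=38, defects_found=0, maintenance_edits=25),
    ExecutionStats("TC-LEGACY-EXPORT-01", executions=2, defects_found=0, maintenance_edits=0),
]
```

A high-maintenance test that never finds defects is a candidate for rewriting or retirement; a rarely executed one may cover a workflow nobody uses any more.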

Test case authorship is not the exclusive domain of QA engineers. In practice, who writes test cases depends on team structure, methodology, and the tools available.

QA engineers are the primary authors in most organisations. They translate requirements and user stories into structured test cases, define preconditions and test data, and build the regression suites that protect existing functionality across releases. Their testing expertise shapes coverage decisions on what to test, at what depth, and in what priority order.

Software development engineers in test (SDETs) combine software engineering and QA skills. They write test cases and convert them directly into automated test scripts, working closely with developers to embed testing into the build and deployment process. In organisations with mature CI/CD pipelines, SDETs are responsible for keeping automated regression suites current.

Business stakeholders write test cases in teams practising behaviour-driven development (BDD). Because BDD uses natural language in the Given-When-Then format, they can define acceptance test cases directly from user stories without technical expertise. These cases then inform both manual testing and automated validation.

Developers write test cases primarily at the unit and integration level. They are responsible for testing the code they write, catching defects before changes reach the QA phase. In Agile teams, the line between developer and tester has blurred significantly, with developers increasingly contributing to functional and integration test case libraries.

Non-technical business users can now write test cases on AI-native test platforms that accept plain English as input. A product manager who understands a business workflow can describe it in natural language and have it converted into an executable test automatically. This removes the bottleneck between business knowledge and test coverage, allowing teams to scale authorship without scaling headcount.

In Agile and Scrum environments, test case authorship is a shared responsibility. Sprint planning sessions involve QA, development, and product stakeholders jointly defining acceptance criteria, which become the basis for test cases written during the sprint. The traditional model of QA writing all test cases after development finishes has been replaced in most modern teams by collaborative authorship throughout the delivery cycle.

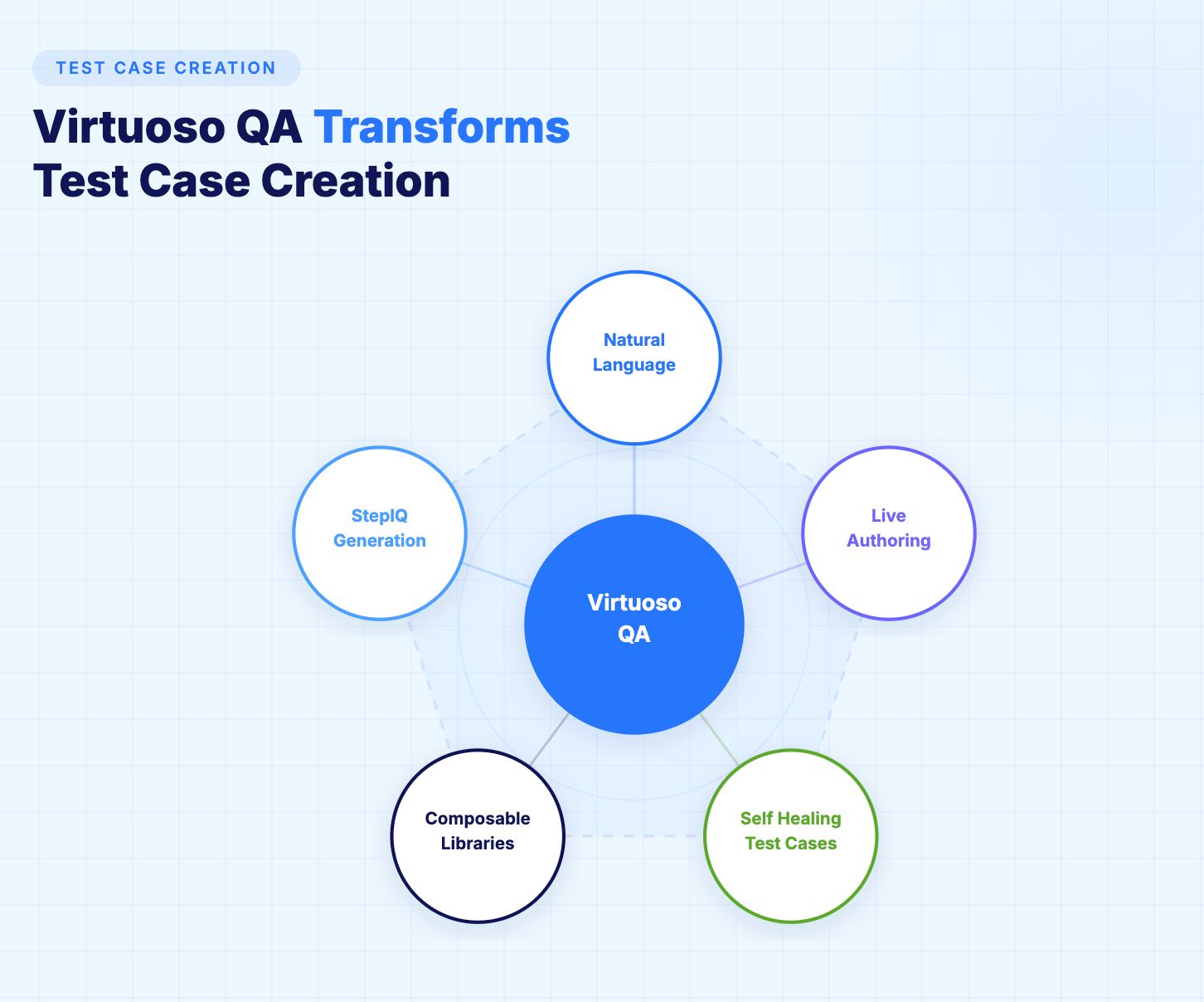

Virtuoso QA eliminates traditional test case documentation overhead through natural language test creation enabling business users to define validation without technical expertise.

Describe test cases in plain English using Virtuoso QA's intuitive interface. AI translates descriptions into executable tests automatically.

As teams create test cases, Virtuoso QA validates steps against the actual application in real time, highlighting incorrect steps immediately.

Virtuoso QA's StepIQ analyzes applications and suggests test steps automatically: describe what to validate, and StepIQ generates how to test it.

Build reusable test case components shared across teams. Common workflows become building blocks accelerating test case creation.

95% self-healing accuracy means test cases adapt automatically to application changes without maintenance.

Try Virtuoso QA in Action

See how Virtuoso QA transforms plain English into fully executable tests within seconds.