Learn how to write effective manual test cases with clear steps, data, and expected results, and see how AI platforms convert them into automated tests.

A test case is a documented set of conditions, steps, and expected outcomes that determines whether a specific feature or function of an application is working correctly. It gives testers precise instructions for what to do, what data to use, and what result to expect, so that any tester can execute the same validation and reach the same conclusion.

Test cases are the foundation of structured software testing. Without them, testing relies on individual memory and judgement, which produces inconsistent results and leaves gaps in coverage that only surface in production.

Well-written test cases serve three purposes simultaneously: they guide execution during testing, they document what has been validated and what has not, and they provide the raw material that AI-native platforms like Virtuoso QA convert into automated tests without manual rewriting.

Test cases are structured documents specifying exactly how to validate specific aspects of application functionality. Each test case defines preconditions, test steps, test data, expected results, and postconditions, enabling any tester to execute validation consistently and compare actual outcomes against expected behavior.

Comprehensive test cases serve several purposes. They provide reproducible validation, so the same tests execute consistently across different testers and test cycles. They document testing coverage, showing which functionality has been validated and where gaps remain. They support knowledge transfer, letting new team members understand validation approaches without relying entirely on experienced testers' institutional knowledge.

Test cases also enable traceability by linking validation activities to requirements, user stories, or acceptance criteria. Regulatory compliance often requires proof that specific requirements have been tested, which makes well-documented test cases essential evidence. Finally, test cases provide the foundation for automation: they define the validation logic that automated tests will execute repeatedly.

Manual test case execution has inherent limitations. Human testers executing hundreds of test cases by hand introduce errors, inconsistencies, and missed steps. Regression testing, which re-executes the same test cases for every release, consumes enormous time and resources. Organizations find themselves hiring armies of manual testers to execute test suites fast enough to keep pace with release schedules.

The arithmetic quickly becomes prohibitive. If a regression suite contains 1,000 test cases, each requiring 10 minutes of manual execution, one complete run takes roughly 167 hours. A single tester working 8-hour days needs about 21 working days for one full regression cycle. Enterprises releasing monthly cannot sustain this timeline with manual execution.
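
The figures above can be reproduced with a short calculation. This is only a sketch: the function name and the default 8-hour working day are illustrative assumptions, and the inputs are the numbers quoted in the paragraph.

```python
import math

def manual_regression_effort(test_cases: int, minutes_per_case: float,
                             hours_per_day: float = 8.0) -> tuple[float, int]:
    """Return (total_hours, working_days) for one full manual regression cycle."""
    total_hours = test_cases * minutes_per_case / 60
    # A partly used day still occupies the tester, so round days up.
    working_days = math.ceil(total_hours / hours_per_day)
    return total_hours, working_days

hours, days = manual_regression_effort(1000, 10)
print(f"{hours:.0f} hours, {days} working days")  # 167 hours, 21 working days
```

Changing either input shows how quickly the cycle length grows with suite size or per-case execution time.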

Modern AI-native testing platforms remove the manual execution burden while preserving the value of test case documentation. Organizations convert existing manual test cases into executable automated tests, keeping the validation logic and coverage while removing repetitive human execution. This enables comprehensive testing at a pace that purely manual approaches cannot match.

Test cases are not one-size-fits-all. Different types serve different purposes within a testing programme, and knowing which type to write for a given situation determines whether testing effort is directed at the right risks.

Functional test cases verify that features behave according to their specifications. They are the most common type and form the backbone of any test suite. A functional test case for a login feature verifies that valid credentials produce a successful login, not that the page loads quickly or looks correct.

Non-functional test cases validate attributes like performance, security, usability, and compatibility rather than specific features. A performance test case might verify that a search results page loads within two seconds under a defined concurrent user load. These cases are distinct from functional ones because they measure quality characteristics rather than feature behaviour.

Positive test cases use valid inputs to verify the system produces the expected correct outcome. They confirm the happy path works as intended. A positive test case for a registration form submits all required fields with valid data and verifies the account is created successfully.

Negative test cases use invalid inputs to verify the system handles errors gracefully. They confirm the application rejects bad data with appropriate messages rather than crashing or producing unexpected behaviour. A negative test case for the same registration form submits an invalid email format and verifies the correct validation error appears.
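
As a rough illustration of the positive and negative cases described above, the sketch below checks a registration form validator. The `validate_registration` function, its error messages, and the simplified email pattern are all hypothetical stand-ins for the system under test.

```python
import re

# Deliberately simple pattern for illustration; real email validation is more involved.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def validate_registration(email: str, password: str) -> list[str]:
    """Hypothetical form validator: returns error messages (empty list means valid)."""
    errors = []
    if not EMAIL_PATTERN.match(email):
        errors.append("Enter a valid email address")
    if len(password) < 8:
        errors.append("Password must be at least 8 characters")
    return errors

# Positive test case: valid data, so no validation errors are expected.
assert validate_registration("test@example.com", "Test123!") == []

# Negative test case: invalid email format, so the specific error is expected.
assert validate_registration("test.example.com", "Test123!") == ["Enter a valid email address"]
```

Note that the negative case asserts the exact error message rather than merely "an error occurred", which mirrors the requirement that the correct validation error appears.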

Boundary test cases target the edges of valid input ranges, where defects cluster most frequently. For a field accepting passwords between 8 and 64 characters, boundary test cases verify behaviour at exactly 7, 8, 64, and 65 characters. These cases catch the off-by-one errors and incorrect comparison operators that functional testing routinely misses.
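
The password example can be sketched as follows. The validator is a hypothetical stand-in for the application; the boundary lengths and the 8 to 64 character range come from the paragraph above.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Values just outside and exactly on each edge of a valid range."""
    return [minimum - 1, minimum, maximum, maximum + 1]

def is_valid_password_length(password: str, minimum: int = 8, maximum: int = 64) -> bool:
    """Hypothetical validator standing in for the system under test."""
    return minimum <= len(password) <= maximum

# Expected outcome per boundary length, written out explicitly rather than
# computed, so a wrong comparison operator in the validator would be caught.
EXPECTED = {7: False, 8: True, 64: True, 65: False}

for length in boundary_values(8, 64):
    actual = is_valid_password_length("x" * length)
    assert actual == EXPECTED[length], f"length {length}: expected {EXPECTED[length]}, got {actual}"
```

If the validator used `<` instead of `<=`, the cases at 8 or 64 characters would fail, which is exactly the class of defect boundary cases target.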

Regression test cases verify that previously working functionality continues to work after a code change. They are executed after every significant change to confirm nothing that worked before has broken. Regression test cases form the core of automated test suites because they need to run repeatedly without modification.

Smoke test cases are a minimal subset of critical tests executed immediately after a build to confirm the application is stable enough for further testing. If smoke tests fail, testing stops and the build is rejected. They cover only the most fundamental functionality and execute quickly.

Integration test cases verify that separate components work correctly together. Where unit tests validate components in isolation, integration test cases validate the interactions between them: data flowing correctly between services, APIs returning expected responses to the application, and database writes producing the correct state.

User acceptance test cases validate that the application meets business requirements from an end user's perspective. They are typically written and executed by business stakeholders or QA teams working from acceptance criteria rather than technical specifications. A UAT test case verifies a complete business workflow rather than an isolated feature.

Usability test cases evaluate whether users can navigate and operate the application without difficulty or confusion. They assess clarity of error messages, discoverability of features, accessibility of interactive elements, and overall ease of use rather than functional correctness.

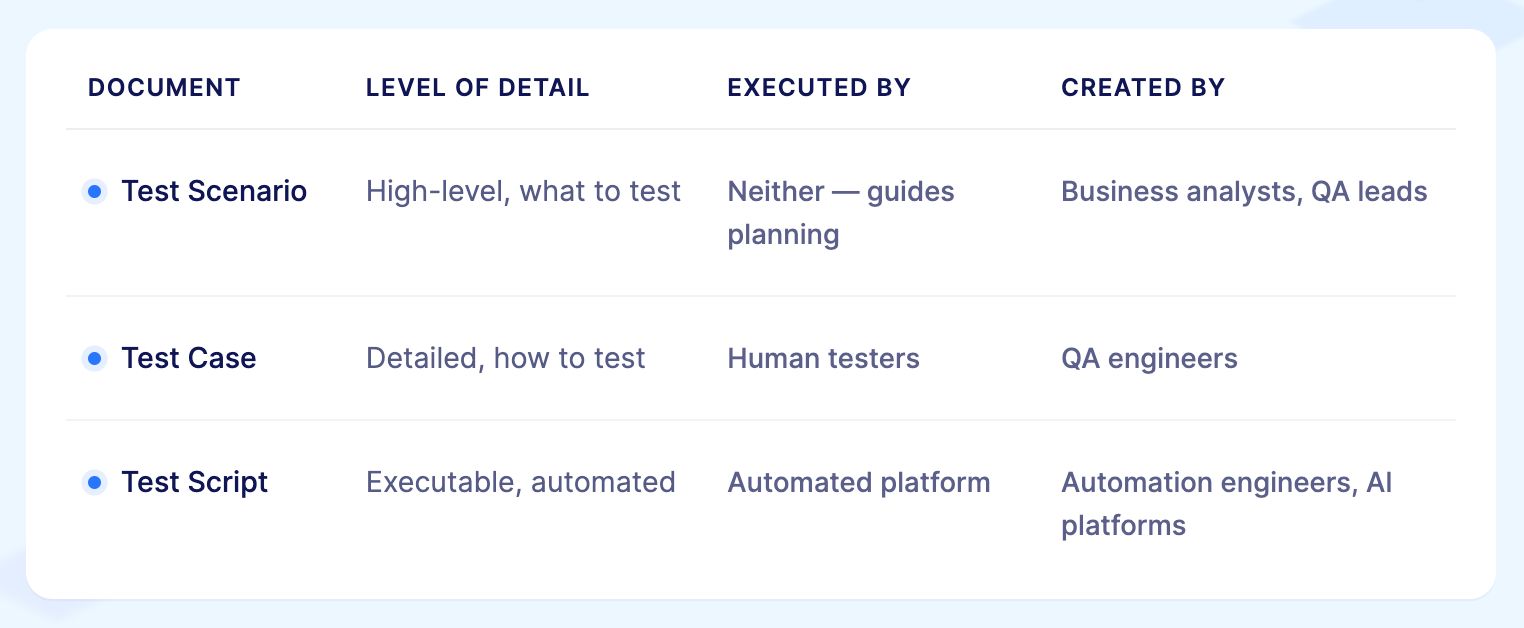

The terms test scenario, test case, and test script are used interchangeably in some teams and treated as completely distinct in others. Understanding the difference prevents miscommunication and ensures the right level of documentation is produced for each situation.

A test scenario is a high-level description of what needs to be validated. It describes the user goal or system behaviour being tested without specifying the exact steps.

"Verify that a user can log in to the application" is a test scenario. It tells you what to test, not how to test it. A single test scenario typically generates multiple test cases covering different conditions.

A test case is a detailed, specific implementation of a test scenario. It specifies the exact preconditions, step-by-step actions, test data, and expected results required to execute one particular validation.

"Verify that a user with valid credentials is redirected to the dashboard after clicking Sign In" is a test case derived from the login scenario. It tells you exactly what to do and what to look for.

A test script is an automated version of a test case written in a programming language or expressed in natural language for an AI-native platform. Where a test case describes what a human tester should do, a test script contains instructions that a machine can execute. A Selenium test script or a Virtuoso QA natural language journey is a test script. It automates the execution of the logic defined in the test case.
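
To make the distinction concrete, here is a minimal sketch of the login test case above expressed as a script. It is plain Python, not Selenium code or Virtuoso QA syntax, and the `sign_in` function with its in-memory user table is a hypothetical stand-in for the real application.

```python
# Hypothetical in-memory stand-in for the application under test.
USERS = {"test@example.com": "Test123!"}

def sign_in(email: str, password: str) -> str:
    """Return the path a user lands on after clicking Sign In."""
    if USERS.get(email) == password:
        return "/dashboard"
    return "/login?error=invalid_credentials"

def check_valid_credentials_redirect_to_dashboard() -> None:
    # Test data and expected result are taken directly from the test case.
    landing_page = sign_in("test@example.com", "Test123!")
    assert landing_page == "/dashboard"

check_valid_credentials_redirect_to_dashboard()
```

The test case stays readable by a human; the script encodes the same steps and expected result in a form a machine can run on every build.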

Well-structured test cases follow consistent formats ensuring clarity and completeness. Understanding each component enables writing test cases that others can execute reliably.

Every test case requires unique identification enabling precise reference in test plans, defect reports, and traceability matrices. Identifiers typically follow patterns like TC001, REGRESSION_LOGIN_001, or MODULE_FEATURE_001, incorporating system, module, or feature context for clarity.

Concise, descriptive titles immediately communicate what the test validates. Effective titles use action verbs and specify the exact scenario: "Verify successful user login with valid credentials," "Validate error message for invalid email format," "Confirm order total calculation with multiple discounts." Poor titles like "Login test" or "Test 1" provide insufficient context.

Brief descriptions provide additional context beyond titles, explaining the test's purpose and what aspect of functionality it validates. For example: "This test verifies that users can successfully authenticate using registered email addresses and passwords, gaining access to their account dashboard upon successful login."

Preconditions specify the system state and data that must exist before test execution begins. "User account exists in database with email test@example.com and password Test123!," "Application is deployed to QA environment," "Test database contains sample product catalog," "User is logged out of the application."

Clearly defined preconditions ensure testers can establish appropriate starting conditions rather than encountering test failures due to improper setup.

Test steps provide sequential instructions for executing the test. Each step should be specific, actionable, and unambiguous. Rather than "Login to application," write "Navigate to login page at https://app.example.com/login, Enter 'test@example.com' in Email field, Enter 'Test123!' in Password field, Click 'Sign In' button."

Granular steps eliminate ambiguity. When tests fail, specific steps enable identifying exactly where execution diverged from expected behavior.

Specify exact test data required for execution. "Email: test@example.com," "Password: Test123!," "Product SKU: WIDGET-001," "Discount Code: SAVE20." Documenting test data ensures reproducibility and enables maintaining test data separately from test steps for reusability.

Expected results define correct system behavior after executing each test step or at test completion. "User redirects to dashboard at https://app.example.com/dashboard," "Welcome message displays: 'Welcome back, John Doe'," "Session cookie sets with 24-hour expiration," "Last login timestamp updates in database."

Precise expected results enable objective pass/fail determination rather than subjective judgment about whether behavior seems correct.

During test execution, testers document actual observed behavior. When actual results match expected results, tests pass. Discrepancies indicate defects requiring investigation and remediation.
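
One way to picture objective pass/fail determination is a field-by-field comparison of expected and actual results. The sketch below is illustrative only: the `evaluate` helper and the field names are assumptions, and the expected values reuse the examples given earlier in this section.

```python
def evaluate(expected: dict[str, str], actual: dict[str, str]) -> tuple[str, list[str]]:
    """Compare results field by field and return (status, discrepancies)."""
    discrepancies = [
        f"{name}: expected {want!r}, got {actual.get(name)!r}"
        for name, want in expected.items()
        if actual.get(name) != want
    ]
    return ("Pass" if not discrepancies else "Fail", discrepancies)

expected = {
    "url": "https://app.example.com/dashboard",
    "welcome_message": "Welcome back, John Doe",
}
# Hypothetical observation recorded by the tester during execution.
actual = {
    "url": "https://app.example.com/dashboard",
    "welcome_message": "Welcome back, John",
}

status, discrepancies = evaluate(expected, actual)
print(status)         # Fail
print(discrepancies)  # ["welcome_message: expected 'Welcome back, John Doe', got 'Welcome back, John'"]
```

The discrepancy list doubles as the starting point for a defect report, since it states exactly which expectation was not met.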

Test cases maintain status throughout their lifecycle: Draft (being written), Ready for Review, Approved, Blocked (cannot execute due to dependencies), Pass (executed successfully), Fail (defect identified), Skip (not applicable for current release).

Priority indicates execution order and importance. Critical tests validate fundamental functionality like user authentication or payment processing. High priority tests cover major features. Medium and low priority tests address secondary functionality or edge cases.

Test case priority informs regression testing strategies when time constraints prevent executing complete test suites.
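
A simple way to apply priority under a time constraint is a greedy selection, sketched below. The numeric priority scale (1 is most critical), the per-case durations, and the case `TC_PROFILE_004` are hypothetical; a real team might weigh risk or recent code changes as well.

```python
def select_for_time_budget(cases: list[dict], budget_minutes: int) -> list[str]:
    """Greedy selection: highest priority first, skipping cases that no longer fit."""
    selected, remaining = [], budget_minutes
    # sorted() is stable, so cases with equal priority keep their original order.
    for case in sorted(cases, key=lambda c: c["priority"]):
        if case["minutes"] <= remaining:
            selected.append(case["id"])
            remaining -= case["minutes"]
    return selected

cases = [
    {"id": "TC_PROFILE_004", "priority": 3, "minutes": 10},
    {"id": "TC_LOGIN_001", "priority": 1, "minutes": 5},
    {"id": "TC_CHECKOUT_001", "priority": 1, "minutes": 15},
    {"id": "TC_SEARCH_001", "priority": 2, "minutes": 10},
]

print(select_for_time_budget(cases, budget_minutes=30))
# ['TC_LOGIN_001', 'TC_CHECKOUT_001', 'TC_SEARCH_001']
```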

Creating high-quality test cases requires systematic approaches ensuring completeness, clarity, and maintainability.

Before writing test cases, make sure you fully understand the functionality being tested. Review requirements documents, user stories, acceptance criteria, design specifications, and prototypes. Clarify ambiguities with product owners and developers. Test cases can only validate functionality that testers understand clearly.

For a user registration feature, understand: What information must users provide? What validation rules apply to each field? What happens after successful registration? What error messages display for invalid input? How does registration integrate with email verification?

Test scenarios represent high-level descriptions of what to test. For user registration: "User registers with valid information," "User attempts registration with existing email," "User submits form with invalid email format," "User leaves required fields empty," "User password fails complexity requirements."

Comprehensive scenario identification ensures test coverage across happy paths, error conditions, boundary cases, and integration points.

Transform scenarios into specific, executable steps. The scenario "User registers with valid information" becomes:

Test Case: TC_REG_001 - Verify successful user registration with valid data

Preconditions: Application is accessible, test database is empty

Steps:

Expected Results:

Document all test data needed for execution. For registration testing: valid names, valid email addresses, invalid email formats, weak passwords, strong passwords, special characters, boundary length values for each field.

Maintaining test data separately from test cases enables reusability and easier updates when data requirements change.

Vague expected results create interpretation ambiguity. Rather than "User should be able to register," specify exactly what success looks like: confirmation message text, navigation destination, email content, database state changes, API responses.

Precise expected results enable objective pass/fail determination and provide developers with clear defect reproduction steps when tests fail.

Peer review improves test case quality. Have teammates review test cases for clarity, completeness, and accuracy. Do steps make sense to someone unfamiliar with the feature? Are preconditions achievable? Are expected results verifiable? Does the test case actually validate what it claims to test?

Group test cases logically by feature, module, or user workflow. Maintain test suites for different testing types: smoke tests, regression tests, acceptance tests. Update test cases when requirements change, ensuring documentation remains accurate.

Effective templates provide structure ensuring consistency and completeness across all test cases.

Test Case ID: [Unique identifier]

Test Case Title: [Concise descriptive title]

Test Description: [Brief explanation of purpose]

Module/Feature: [Application area being tested]

Priority: [Critical/High/Medium/Low]

Preconditions: [Required system state and data]

Test Steps:

1. [First specific action]

2. [Second specific action]

3. [Continue for all steps]

Test Data:

- [List all required data inputs]

Expected Results:

- [Specific expected outcome for each critical step]

- [Final expected state after test completion]

Actual Results: [To be completed during execution]

Status: [Pass/Fail/Blocked/Skip]

Notes: [Any additional observations]
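
Teams that keep test cases in a repository sometimes mirror this template as a data structure so that incomplete cases can be flagged automatically. The sketch below is one possible shape, not a prescribed format: the class and field names are assumptions, `NOT_RUN` is an added placeholder state that is not in the template's status list, and the description and module values in the example are illustrative.

```python
from dataclasses import dataclass
from enum import Enum

class Priority(Enum):
    CRITICAL = "Critical"
    HIGH = "High"
    MEDIUM = "Medium"
    LOW = "Low"

class Status(Enum):
    NOT_RUN = "Not run"  # assumption: placeholder until the case is executed
    PASS = "Pass"
    FAIL = "Fail"
    BLOCKED = "Blocked"
    SKIP = "Skip"

@dataclass
class ManualCase:
    case_id: str
    title: str
    description: str
    module: str
    priority: Priority
    preconditions: list[str]
    steps: list[str]
    test_data: dict[str, str]
    expected_results: list[str]
    actual_results: str = ""
    status: Status = Status.NOT_RUN
    notes: str = ""

    def empty_sections(self) -> list[str]:
        """Names of sections a reviewer would flag as empty before execution."""
        required = ("title", "preconditions", "steps", "expected_results")
        return [name for name in required if not getattr(self, name)]

login_case = ManualCase(
    case_id="TC_LOGIN_001",
    title="Verify successful login with valid credentials",
    description="Registered users can authenticate and reach their dashboard.",
    module="Authentication",
    priority=Priority.CRITICAL,
    preconditions=["User account exists (email: testuser@example.com, password: Test123!)"],
    steps=[
        "Navigate to the login page",
        "Enter 'testuser@example.com' in Email field",
        "Enter 'Test123!' in Password field",
        "Click 'Sign In' button",
    ],
    test_data={"Email": "testuser@example.com", "Password": "Test123!"},
    expected_results=["User redirects to dashboard"],
)
print(login_case.empty_sections())  # []
```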

TC_LOGIN_001 - Verify successful login with valid credentials

Preconditions: User account exists (email: testuser@example.com, password: Test123!)

Steps:

Expected Results:

TC_LOGIN_002 - Verify error message for invalid password

Preconditions: User account exists (email: testuser@example.com)

Steps:

Expected Results:

TC_CHECKOUT_001 - Verify successful order placement

Preconditions:

Steps:

Expected Results:

TC_SEARCH_001 - Verify search returns relevant results for a valid query

Preconditions:

Steps:

Expected Results:

Following established best practices improves test case quality, maintainability, and value.

Test cases should be understandable to anyone on the QA team, not just the author. Use simple, direct language. Avoid jargon unless it is standard terminology everyone understands. Each test case should validate one specific aspect of functionality rather than combining multiple unrelated tests.

Test cases should execute independently without depending on other test cases running first. Each test establishes its own preconditions rather than assuming a previous test left the system in a particular state. This independence enables parallel execution and allows test sequences to be reordered without breaking anything.
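
The idea can be sketched with two checks that each build their own starting state. Everything here is hypothetical: `fresh_store` plays the role of the documented preconditions, and `register` stands in for the application.

```python
def fresh_store() -> dict[str, str]:
    """Build the precondition state from scratch: exactly one registered user."""
    return {"test@example.com": "Test123!"}

def register(store: dict[str, str], email: str, password: str) -> bool:
    """Hypothetical registration: rejects an email that already exists."""
    if email in store:
        return False
    store[email] = password
    return True

def check_register_new_user() -> None:
    store = fresh_store()
    assert register(store, "new@example.com", "Str0ngPass!") is True

def check_existing_email_is_rejected() -> None:
    store = fresh_store()  # does not rely on any other check having run
    assert register(store, "test@example.com", "Other123!") is False

# Because each check sets up its own state, any execution order works.
for check in (check_existing_email_is_rejected, check_register_new_user):
    check()
for check in (check_register_new_user, check_existing_email_is_rejected):
    check()
```

Had both checks shared one store, running the registration check twice with the same new email would fail the second time, which is the kind of order dependence this practice avoids.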

Positive test cases verify systems work correctly with valid inputs. Negative test cases verify systems handle invalid inputs gracefully. Comprehensive testing requires both. For every valid input scenario, consider invalid alternatives: wrong data types, missing required fields, values outside acceptable ranges, boundary conditions.

Test cases decay when applications evolve but documentation does not. Establish processes for updating test cases when requirements change, user interfaces are redesigned, or business logic updates. Outdated test cases waste execution time and create false defect reports.

Consistent naming enables quick identification of test scope and purpose. Include module names, feature identifiers, and scenario types in test case IDs. "AUTH_LOGIN_VALID_001" immediately indicates authentication module, login feature, valid credentials scenario.
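
A naming convention is easiest to keep consistent when it can be checked mechanically. The sketch below assumes a `MODULE_FEATURE_SCENARIO_NNN` pattern with upper-case words and a three-digit sequence number, inferred from the single example above; adjust the pattern to whatever convention your team actually adopts.

```python
import re
from typing import NamedTuple

class CaseId(NamedTuple):
    module: str
    feature: str
    scenario: str
    sequence: int

# Assumed convention: MODULE_FEATURE_SCENARIO_NNN.
ID_PATTERN = re.compile(r"^([A-Z]+)_([A-Z]+)_([A-Z]+)_(\d{3})$")

def parse_case_id(raw: str) -> CaseId:
    """Split a test case ID into its parts, or raise if it breaks the convention."""
    match = ID_PATTERN.match(raw)
    if match is None:
        raise ValueError(f"{raw!r} does not follow MODULE_FEATURE_SCENARIO_NNN")
    module, feature, scenario, sequence = match.groups()
    return CaseId(module, feature, scenario, int(sequence))

print(parse_case_id("AUTH_LOGIN_VALID_001"))
# CaseId(module='AUTH', feature='LOGIN', scenario='VALID', sequence=1)
```

An ID such as "Test 1" raises a `ValueError`, so a check like this could run as part of test case review.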

Make implicit assumptions explicit. If a test assumes browser cookies are enabled, document that assumption. If the test requires specific user permissions, state that explicitly. Documented assumptions prevent confusion when tests fail due to unmet preconditions.

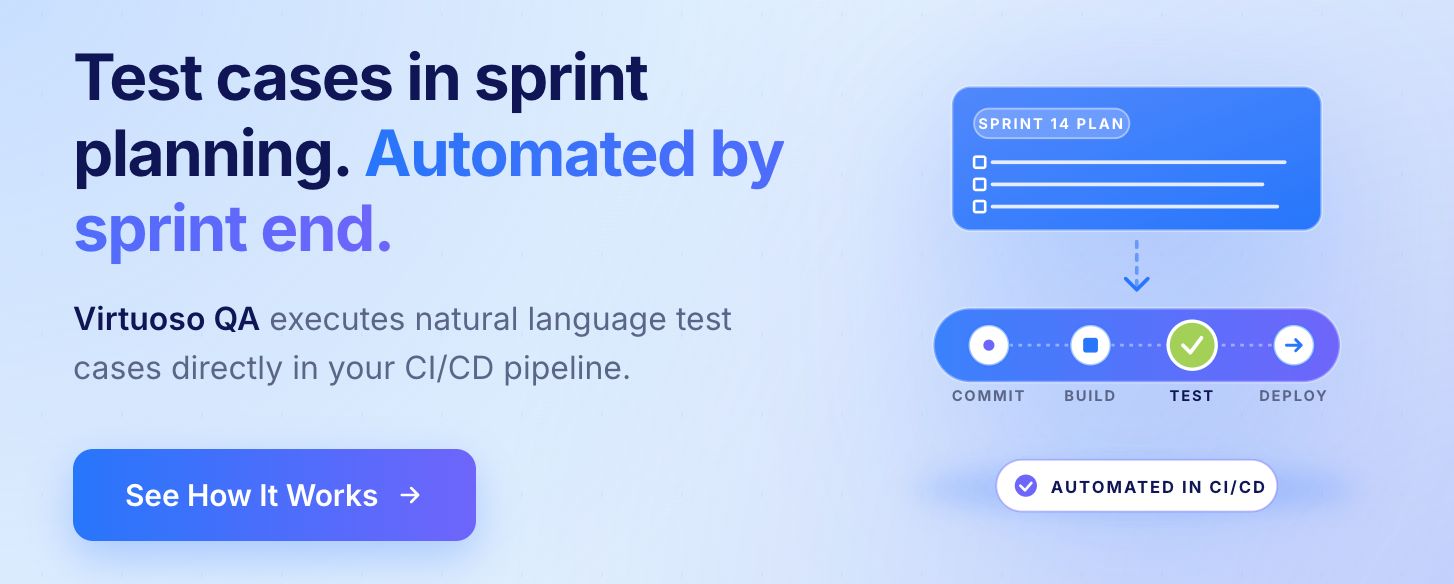

Test case writing in Agile environments follows the same principles as traditional testing but operates under different constraints and serves a different rhythm. Understanding how test cases fit into sprint delivery and CI/CD pipelines prevents the most common failure mode: test cases that are written too late to influence development and maintained too infrequently to remain useful.

In Agile delivery, test cases should be written during backlog refinement and sprint planning, not after development completes. When a user story is refined, the acceptance criteria define the conditions the feature must satisfy. Those acceptance criteria translate directly into test cases before a single line of code is written.

Writing test cases during refinement serves three purposes:

In mature Agile teams, test cases evolve into executable specifications. Rather than existing as separate documentation that someone must translate into automation, test cases are written in a format that can be directly executed by an AI-native platform or a BDD framework.

Virtuoso QA's natural language authoring removes the translation step entirely. A test case written in plain English during sprint planning is the same artefact that executes in the CI/CD pipeline after development completes. There is no separate automation phase and no risk of the automated test diverging from the documented test case.

Test cases decay in Agile environments when updates do not keep pace with product changes. A test case written in sprint three may be invalidated by a change in sprint seven. Without a clear process for identifying and updating affected test cases when requirements change, the test suite accumulates stale cases that either fail for the wrong reasons or pass despite the feature being broken.

Practical approaches to staying current:

Test cases feed CI/CD pipelines in tiers based on execution speed and scope:

This tiered approach ensures the most critical test cases provide feedback at the earliest possible point without making every commit wait for the full regression suite to complete.

Even experienced testers make mistakes that reduce test case effectiveness. Recognizing common pitfalls enables avoiding them.

"Test the login functionality" provides no guidance about what to actually do. Specific steps like "Enter username, enter password, click submit button" enable consistent execution.

Test cases specifying steps without expected results leave pass/fail determination to subjective judgment. Always document exactly what should happen at each critical step or at test completion.

Test cases validating five different aspects of functionality in one long test become difficult to maintain and debug. When such tests fail, identifying which of the five scenarios actually failed requires investigation. Separate test cases for distinct scenarios improve clarity.

Test cases assuming testers know implicit details create execution inconsistencies. Document everything explicitly: which environment to use, where to find test data, what browser to use, what user role to authenticate with.

Testing only happy paths misses many defects. Include boundary value testing, negative scenarios, concurrent operations, and system limits in comprehensive test coverage.

Test cases disconnected from requirements cannot prove coverage. Maintain clear links between test cases and the requirements, user stories, or acceptance criteria they validate.
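
A minimal traceability check might look like the following. The requirement IDs and the links between test cases and requirements are hypothetical; in practice they would come from a test management tool or a traceability matrix.

```python
# Hypothetical requirement IDs and test-case-to-requirement links.
REQUIREMENTS = ["REQ-101", "REQ-102", "REQ-103"]
LINKS = {
    "TC_LOGIN_001": ["REQ-101"],
    "TC_LOGIN_002": ["REQ-101", "REQ-102"],
}

def uncovered_requirements(requirements: list[str], links: dict[str, list[str]]) -> list[str]:
    """Requirements that no test case claims to validate."""
    covered = {req for linked in links.values() for req in linked}
    return [req for req in requirements if req not in covered]

print(uncovered_requirements(REQUIREMENTS, LINKS))  # ['REQ-103']
```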

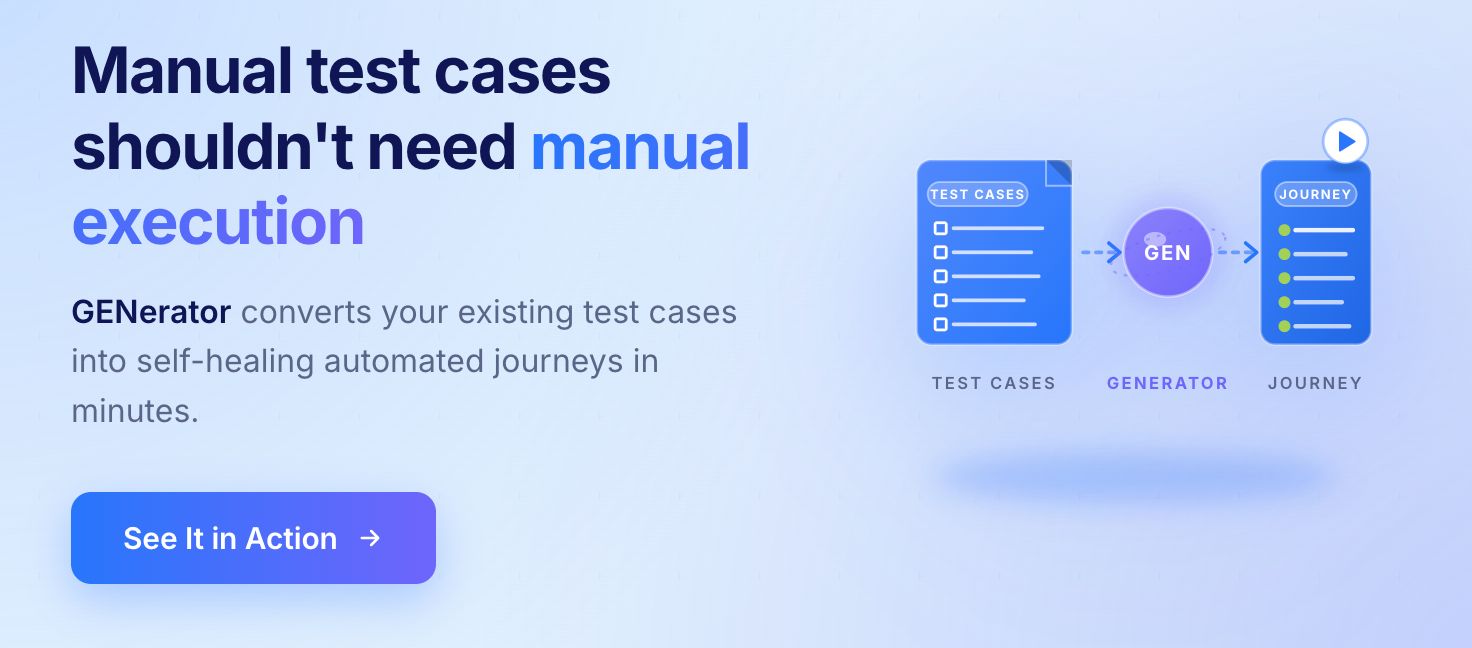

The greatest value of well-written manual test cases emerges when organizations convert them into automated tests, eliminating repetitive manual execution while preserving validation logic.

Historically, converting manual test cases to automation required specialized automation engineers to read test case documentation, write Selenium or similar framework code implementing each step, create test data management, build reporting infrastructure, and integrate with CI/CD pipelines. This process consumed weeks or months per test suite.

The conversion bottleneck meant most manual test cases never became automated. Organizations maintained two separate inventories: manual test cases executed by human testers, and automated tests covering a small subset of functionality. Keeping both in sync as applications evolved multiplied maintenance burden.

Modern test platforms like Virtuoso QA eliminate the manual conversion bottleneck through AI-powered test generation that directly converts manual test case documentation into executable automated tests.

Organizations input manual test case descriptions, steps, and expected results into GENerator. The platform analyzes natural language test documentation, understands test intent and workflow, identifies UI elements and interactions needed, creates executable test scenarios, generates assertions for expected results validation, and produces ready-to-run automated tests preserving all validation logic.

What traditionally required weeks of automation engineering completes in minutes through AI-powered conversion.

Rather than requiring manual test cases to be rewritten in special formats or scripts, AI-native platforms accept test cases as written in plain English. The same documentation manual testers use to execute tests becomes input for automated test generation.

This natural language understanding means existing test case inventories become immediate candidates for automation without requiring testers to learn Gherkin syntax, write step definitions, or translate test cases into technical specifications for automation engineers.

Manual test cases represent years of accumulated testing knowledge: understanding of critical workflows, identification of edge cases requiring validation, recognition of areas prone to defects. Converting manual test cases to automation preserves this knowledge while eliminating repetitive execution.

Organizations retain the intellectual capital invested in test case creation while transforming execution from slow manual processes to fast automated validation.

After manual test cases are converted to automated tests, maintenance becomes the critical concern. Traditional automation requires updating test code whenever the application changes. AI-native platforms with self-healing adapt automated tests automatically when UI elements move, workflows evolve, or page structures change.

Virtuoso QA's 95% self-healing accuracy means only 5% of application changes require human intervention to update automated tests. Organizations report 88% to 90% reduction in test maintenance effort, enabling teams to focus on expanding coverage rather than fixing broken tests.

Effective test case management requires appropriate tools and organizational practices beyond individual test case writing skills.

Test cases should be version controlled alongside application code. Changes to test case documentation are tracked, previous versions can be retrieved if needed, multiple testers can collaborate without conflicts, and test case evolution aligns with application releases.

Logical organization enables efficient test execution. Group test cases by module or feature area, priority level, testing type (smoke, regression, acceptance), and execution environment (dev, QA, staging).

Well-organized suites enable executing appropriate subsets for different scenarios: quick smoke tests after deployments, comprehensive regression before major releases, focused feature testing during development.
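
If each test case carries metadata for module, suite, and priority, selecting a subset becomes a simple filter. The sketch below is illustrative: the metadata keys and the suite and priority assignments given to each case ID are assumptions, not values defined elsewhere in this article.

```python
CASES = [
    {"id": "TC_LOGIN_001", "module": "auth", "suite": "smoke", "priority": "critical"},
    {"id": "TC_LOGIN_002", "module": "auth", "suite": "regression", "priority": "high"},
    {"id": "TC_CHECKOUT_001", "module": "checkout", "suite": "smoke", "priority": "critical"},
    {"id": "TC_SEARCH_001", "module": "search", "suite": "regression", "priority": "medium"},
]

def select_cases(cases: list[dict], **criteria: str) -> list[str]:
    """Return IDs of cases whose metadata matches every given criterion."""
    return [c["id"] for c in cases if all(c.get(k) == v for k, v in criteria.items())]

# Quick smoke run after a deployment.
print(select_cases(CASES, suite="smoke"))  # ['TC_LOGIN_001', 'TC_CHECKOUT_001']

# Focused regression run for one module during development.
print(select_cases(CASES, module="auth", suite="regression"))  # ['TC_LOGIN_002']
```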

Regular analysis of test coverage identifies gaps requiring additional test cases. Which requirements lack test cases? Which application modules have insufficient validation? What edge cases remain untested?

Coverage analysis informs test case writing priorities, ensuring testing effort focuses on areas with highest quality risk.

Test case creation and management continue to evolve as AI capabilities advance.

Next-generation platforms will analyze requirements and automatically generate comprehensive test case documentation without human authoring. Product owners write requirements, AI generates test cases covering functionality validation, edge cases, error handling, and integration points.

This autonomous generation dramatically accelerates test case creation while ensuring completeness that manual authoring might miss.

As applications evolve, test cases must be updated to reflect changes. AI systems will automatically update test case documentation when functionality changes, analyzing what changed in requirements or code, identifying affected test cases, updating steps and expected results to match new behavior, and preserving coverage for unchanged functionality.

AI will analyze test execution history to identify redundant test cases providing no additional coverage, flaky test cases requiring improvement or removal, high-value test cases detecting frequent defects, and optimal execution sequences minimizing total runtime. This intelligent optimization ensures test suites remain effective and efficient as they grow.
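
Part of this analysis can already be approximated with simple heuristics. The sketch below labels test cases from their recent run history; the labels, the rule that any mix of passes and failures marks a flaky candidate, and the sample history are all assumptions for illustration and say nothing about how any particular platform performs this analysis.

```python
def classify_history(history: dict[str, list[str]]) -> dict[str, str]:
    """Label each test case from its recent results ('pass' or 'fail')."""
    labels = {}
    for case_id, results in history.items():
        outcomes = set(results)
        if not results:
            labels[case_id] = "no data"
        elif outcomes == {"pass"}:
            labels[case_id] = "stable"
        elif outcomes == {"fail"}:
            labels[case_id] = "consistently failing"
        else:
            # Mixed results; worth checking whether the code changed between runs.
            labels[case_id] = "flaky candidate"
    return labels

# Hypothetical results from the last four runs of each case.
history = {
    "TC_LOGIN_001": ["pass", "pass", "pass", "pass"],
    "TC_SEARCH_001": ["pass", "fail", "pass", "fail"],
    "TC_CHECKOUT_001": ["fail", "fail", "fail", "fail"],
}
print(classify_history(history))
```

A mixed history is only a hint: a case that failed and then passed after a genuine fix is not flaky, so results should be read alongside the change log.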

Virtuoso QA offers evaluation programs enabling teams to experience direct manual test case to automated test conversion using actual test documentation and applications. Proof of concepts demonstrate GENerator capabilities converting existing manual test cases to executable automation, natural language test creation enabling non-technical team members to contribute, and self-healing maintenance eliminating the update burden that plagues traditional automation.

Organizations seeking to eliminate manual testing bottlenecks while preserving test case documentation value should begin evaluation immediately.
