Should You Outsource Software Testing in 2026?

Explore the benefits, drawbacks, and hidden costs of outsourcing software testing, and why AI-native automation is a smarter alternative for enterprise teams.

Outsourcing software testing promises access to specialized expertise, reduced costs, and faster scaling. And for many organizations, it delivers on those promises, at least initially. But the outsourcing model carries structural limitations that become more visible as applications grow in complexity and release velocity increases. Knowledge transfer gaps, communication overhead, time zone friction, and vendor dependency create hidden costs that erode the initial savings.

This guide provides an honest analysis of when outsourcing makes sense, where it breaks down, and how AI-native test automation is emerging as a third option that combines the scalability of outsourcing with the control and speed of in-house teams.

Software testing outsourcing is the practice of contracting external companies or teams to perform quality assurance activities on your software applications. Instead of building and maintaining a full in-house QA team, organizations delegate some or all testing responsibilities to a third-party provider.

The outsourcing model ranges from staff augmentation (embedding external testers within your existing team) to fully managed testing services (where the provider takes end-to-end responsibility for quality assurance, including strategy, execution, and reporting).

Staff augmentation supplements your existing QA team with external testers who work under your direction. You retain control of the testing strategy, tools, and processes, while the provider supplies skilled testers to fill capacity gaps.

Project-based outsourcing engages an external team for a specific project or release cycle. The provider works to defined deliverables within a fixed scope and timeline.

This model works for:

Managed testing services transfer full responsibility for testing to the provider. The provider defines the testing approach, selects tools, manages execution, and delivers quality reporting. The client defines quality requirements, and the provider determines how to meet them.

The dedicated team model establishes a testing team at the provider's location that serves your organization exclusively.

This model combines:

Testing outsourcing providers maintain teams with diverse expertise across testing methodologies, tools, industries, and technologies. An in-house team of five testers cannot match the breadth of experience available through a provider that serves dozens of clients across multiple domains.

This is particularly valuable for specialized testing needs that do not justify a permanent hire, such as:

The most cited benefit of outsourcing is labor cost arbitrage.

Beyond direct labor savings, outsourcing eliminates overhead costs including:

For organizations with fluctuating testing demands, outsourcing converts fixed QA costs into variable costs that scale with actual need.

Building an in-house QA team takes months. The hiring and onboarding process for a major project launch can consume the entire ramp-up window. Outsourcing providers can:

Organizations whose competitive advantage lies in product development, not QA operations, benefit from outsourcing testing to specialists. This allows engineering leadership to:

Distributed outsourcing teams enable round-the-clock testing operations. When your development team finishes a sprint in New York, a testing team in Bangalore can execute overnight. By the time developers arrive the next morning, test results are ready for review. This follow-the-sun model can compress testing timelines significantly.

Every outsourced engagement begins with knowledge transfer, and it never truly ends. External teams need to understand your:

This knowledge transfer is time-consuming, often incomplete, and must be repeated every time team members rotate. For complex enterprise systems with deep business logic, such as insurance underwriting, financial transaction processing, or healthcare clinical workflows, the knowledge gap between an outsourced team and domain experts who have worked with the application for years can be significant.

Testing requires continuous communication between developers and testers. When a test fails, the tester needs context about recent changes. When a defect is found, the developer needs detailed reproduction steps and environment details. This communication happens naturally within a co-located team but becomes process overhead with outsourced providers.

Time zone differences amplify this friction:

Outsourcing providers serve multiple clients simultaneously. Their best testers are typically assigned to the accounts that generate the most revenue or that are in the most critical phase. This creates several risks:

Over time, the outsourced team accumulates critical knowledge about your applications and testing infrastructure. This creates dependency:

Granting external teams access to your applications, test environments, and potentially production-adjacent data introduces security considerations. Industries with strict data handling requirements must ensure that outsourced testers operate within compliance boundaries. This often requires:

The contractual structure of outsourcing relationships can reduce organizational agility:

In rapidly evolving technology environments, this contractual overhead can slow the pace of quality improvement.

The most consequential drawback of outsourcing emerges when organizations outsource test automation development. When an external team builds your automation framework, a compounding problem develops.

This is why organizations increasingly recognize that the automation platform decision is too strategic to outsource. The right automation architecture can reduce the need for outsourced testing altogether.

The traditional outsourcing decision assumes a binary choice: build an expensive in-house team or hire a cheaper external one. AI-native test automation introduces a third path that fundamentally changes the economics.

The primary reason organizations outsource is insufficient testing capacity. AI-native test platforms address this constraint directly. Enterprise customers have demonstrated 4X increases in testing capacity per tester after adopting AI-native automation. At that ratio, an in-house team of 10 testers on an AI-native platform can deliver the output that previously required 40 testers on traditional tools.

This does not mean AI replaces testers. It means each tester can create, execute, and maintain dramatically more test coverage. Natural language test authoring eliminates the coding bottleneck. Self-healing AI eliminates the maintenance burden that consumes 80% of traditional automation effort. Autonomous test generation through capabilities like Virtuoso QA's StepIQ accelerates coverage expansion without proportional effort.

When your own team builds tests in natural language (plain English descriptions of test scenarios rather than code), the knowledge embedded in those tests is accessible to everyone in your organization. A product owner can read and validate the test. A new team member can understand the test suite without learning a framework. Business logic captured in natural language tests becomes organizational knowledge rather than vendor knowledge.

Consider an organization that outsources 20 testers at an average fully loaded cost of $40,000 per year each. That is $800,000 annually in outsourcing costs, before accounting for knowledge transfer, management overhead, and communication friction.

The same organization, investing in an AI-native automation platform with a team of 5 in-house testers, can deliver equivalent or greater coverage: 5 testers at 4X capacity match the output of the 20 outsourced testers. The total cost is platform licensing plus 5 fully loaded in-house testers. For most enterprise organizations, this configuration delivers lower total cost of ownership with significantly better quality outcomes, faster feedback loops, and zero knowledge transfer risk.
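The comparison above can be worked through as a small calculation. This is a minimal sketch, not a pricing model: the 20 testers, the $40,000 outsourced rate, the 5 in-house testers, and the 4X multiplier come from the text, while the in-house cost per tester and the platform license fee are hypothetical placeholders that you would replace with your own figures.

```python
from dataclasses import dataclass


@dataclass
class TeamCost:
    testers: int
    cost_per_tester: float           # fully loaded annual cost per tester
    capacity_multiplier: float = 1.0
    platform_license: float = 0.0    # annual platform fee, if any

    @property
    def annual_cost(self) -> float:
        return self.testers * self.cost_per_tester + self.platform_license

    @property
    def effective_capacity(self) -> float:
        # Capacity expressed in "traditional tester" equivalents.
        return self.testers * self.capacity_multiplier


def break_even_license(outsourced: TeamCost, in_house: TeamCost) -> float:
    """Largest annual license fee at which in-house costs no more than outsourced."""
    return outsourced.annual_cost - in_house.testers * in_house.cost_per_tester


# Figures from the article.
outsourced = TeamCost(testers=20, cost_per_tester=40_000)

# HYPOTHETICAL figures: in-house cost and license fee are placeholders.
in_house = TeamCost(
    testers=5,
    cost_per_tester=90_000,
    capacity_multiplier=4.0,
    platform_license=150_000,
)

print(f"Outsourced: ${outsourced.annual_cost:,.0f} for "
      f"{outsourced.effective_capacity:.0f} tester-equivalents")
print(f"In-house:   ${in_house.annual_cost:,.0f} for "
      f"{in_house.effective_capacity:.0f} tester-equivalents")
print(f"Break-even license fee: ${break_even_license(outsourced, in_house):,.0f}")
```

The break-even figure is the useful output: it shows how much platform licensing the in-house option can absorb before it stops being cheaper, given whatever salary assumptions you plug in.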

Enterprise implementations have demonstrated 30% to 40% overall QA cost reduction compared to traditional approaches, whether those traditional approaches are in-house or outsourced.

AI-native automation does not eliminate every outsourcing scenario. Outsourcing remains valuable in four cases: short-term capacity surges during major implementations or migrations; specialized testing that requires domain expertise your team lacks and AI cannot replicate (such as deep ERP configuration validation during a first-time implementation); geographic testing that requires testers in specific markets for localization and cultural validation; and exploratory testing, where fresh perspectives from external testers surface issues that internal teams overlook through familiarity bias.

The strategic move is to outsource selectively for these specific scenarios while building AI-native automation capability in-house for ongoing regression, functional, and end-to-end testing.

Virtuoso QA is the AI-native test platform that makes the third option practical.

Enterprise teams using Virtuoso QA have reduced overall QA costs by 30 to 40 percent compared to outsourced testing, while achieving faster feedback cycles and higher coverage.

Most enterprise organizations benefit from a combination. Build AI-native automation capability in-house for core applications and ongoing testing. Engage outsourced specialists for specific initiatives, domain expertise, and exploratory testing.

Use the AI-native platform as the shared infrastructure that both internal and external teams operate on, ensuring that all test assets remain in your organization regardless of who creates them.

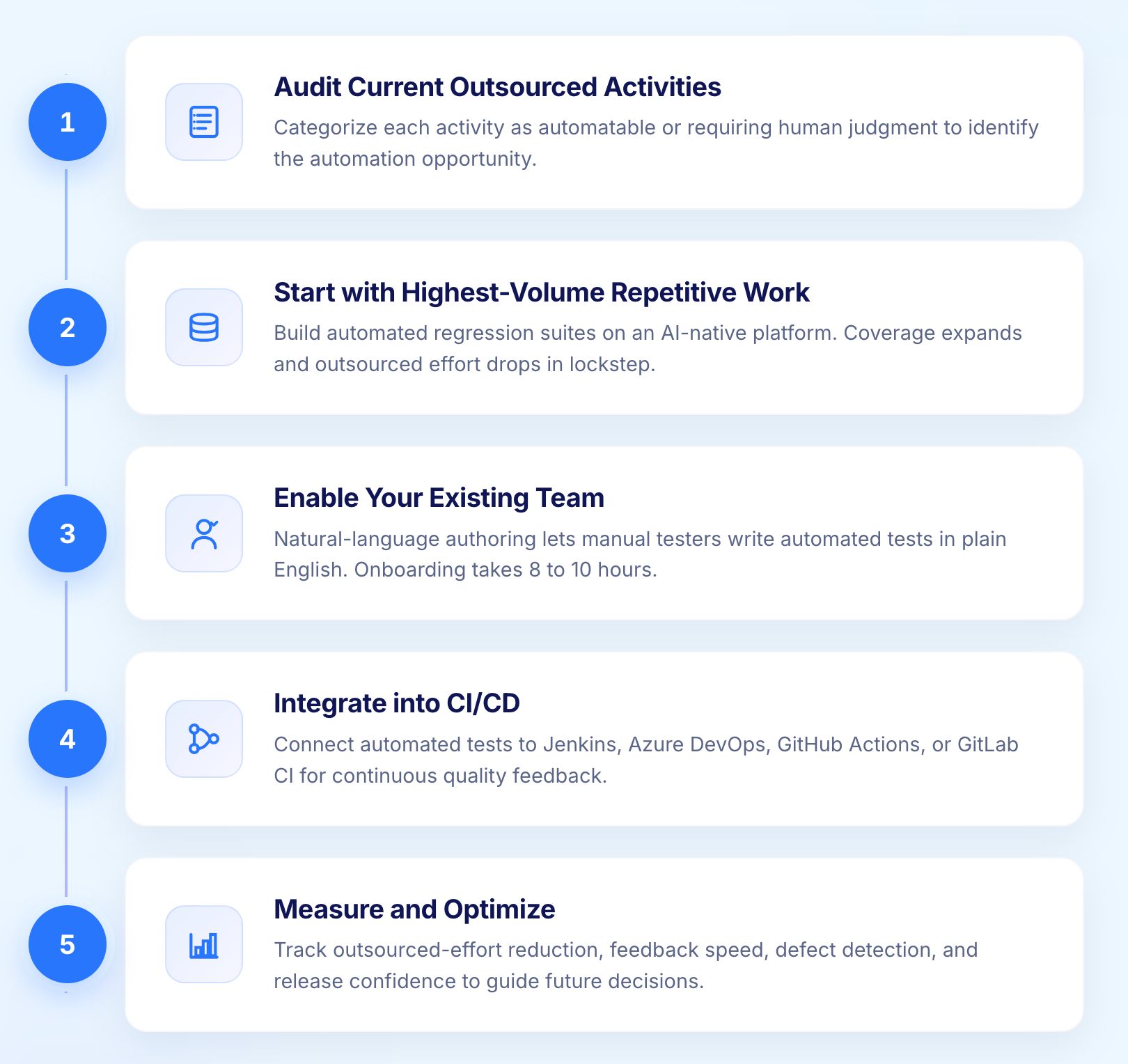

Categorize every outsourced testing activity as either automatable (regression, functional, cross-browser, API validation) or requiring human judgment (exploratory, usability, localization and cultural review). This audit identifies the automation opportunity.
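One way to run this audit is to tag each outsourced activity and total the effort per category. The sketch below is illustrative only: the category names follow the examples in the text, while the activity list, the monthly hours, and the "needs review" bucket for unlisted activity types are hypothetical.

```python
from collections import Counter

# Categories taken from the examples in the text.
AUTOMATABLE = {"regression", "functional", "cross-browser", "api validation"}
HUMAN_JUDGMENT = {"exploratory", "usability", "localization and cultural review"}


def classify(activity_type: str) -> str:
    """Map an activity type to an audit category."""
    key = activity_type.strip().lower()
    if key in AUTOMATABLE:
        return "automatable"
    if key in HUMAN_JUDGMENT:
        return "human judgment"
    # Anything not listed is flagged rather than guessed at.
    return "needs review"


def audit(activities: list[tuple[str, float]]) -> dict[str, float]:
    """Sum monthly hours per audit category."""
    totals: Counter = Counter()
    for activity_type, hours in activities:
        totals[classify(activity_type)] += hours
    return dict(totals)


# HYPOTHETICAL monthly hours per outsourced activity.
outsourced_work = [
    ("Regression", 320),
    ("Exploratory", 80),
    ("API validation", 60),
    ("Performance", 40),
]

for category, hours in audit(outsourced_work).items():
    print(f"{category}: {hours} hours/month")
```

The share of hours that lands in the automatable bucket gives a first estimate of how much outsourced effort automation could replace.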

Regression testing is typically the largest portion of outsourced testing effort and the most straightforward to automate. Begin by building automated regression suites on an AI-native platform. As automated coverage expands, the need for outsourced regression testers decreases proportionally.

Natural language test authoring platforms eliminate the coding barrier that previously limited who could automate. Manual testers who currently rely on outsourced automation engineers can write their own automated tests in plain English. Typical onboarding to AI-native platforms takes 8 to 10 hours, not weeks or months.

Connect automated tests to your CI/CD pipeline through native integrations with Jenkins, Azure DevOps, GitHub Actions, or GitLab CI. This creates continuous quality feedback that eliminates the batch testing model that outsourcing relationships often depend on.
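To make "continuous quality feedback" concrete, a pipeline step can fail the build whenever the automated suite reports a failure. The sketch below is a generic gate, not any vendor's integration: it assumes the test run produces a JUnit-style XML report (a common interchange format for CI tools), and the sample report and function names are hypothetical.

```python
import xml.etree.ElementTree as ET


def count_failures(junit_xml: str) -> tuple[int, int]:
    """Return (total, failed) test cases found in a JUnit-style XML report."""
    root = ET.fromstring(junit_xml)
    total = failed = 0
    for case in root.iter("testcase"):
        total += 1
        # A test case with a <failure> or <error> child did not pass.
        if case.find("failure") is not None or case.find("error") is not None:
            failed += 1
    return total, failed


def gate(junit_xml: str) -> int:
    """Return a process exit code: 0 if every test passed, 1 otherwise."""
    total, failed = count_failures(junit_xml)
    print(f"{failed} of {total} tests failed")
    return 1 if failed else 0


# HYPOTHETICAL report content, for illustration only.
SAMPLE_REPORT = """<testsuite name="regression">
  <testcase name="login"/>
  <testcase name="checkout"><failure message="total mismatch"/></testcase>
</testsuite>"""

exit_code = gate(SAMPLE_REPORT)
```

In a real pipeline step, the script would read the report file produced by the test run and pass the returned code to `sys.exit`, so that a failing suite blocks the merge or deployment on every commit rather than at the end of a batch cycle.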

Track the reduction in outsourced effort as automated coverage expands. Measure the improvement in feedback speed, defect detection rate, and release confidence. Use these metrics to make informed decisions about where outsourced testing still delivers value and where it can be replaced.
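These measurements can be tracked as periodic snapshots and compared against a baseline. The following is a minimal sketch with hypothetical field names and numbers; it covers outsourced effort, automated coverage, and feedback time, and other metrics such as defect detection rate could be added the same way.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    period: str
    outsourced_hours: float   # outsourced testing hours in the period
    automated_tests: int      # test cases covered by automation
    total_tests: int          # all test cases in scope
    feedback_minutes: float   # time from commit to test results


def coverage(s: Snapshot) -> float:
    """Fraction of in-scope test cases that are automated."""
    return s.automated_tests / s.total_tests


def pct_change(before: float, after: float) -> float:
    """Percentage change from baseline; negative means a reduction."""
    return (after - before) / before * 100


# HYPOTHETICAL figures, for illustration only.
baseline = Snapshot("Q1", outsourced_hours=400, automated_tests=100,
                    total_tests=500, feedback_minutes=480)
current = Snapshot("Q3", outsourced_hours=250, automated_tests=350,
                   total_tests=500, feedback_minutes=60)

print(f"Automated coverage: {coverage(baseline):.0%} -> {coverage(current):.0%}")
print(f"Outsourced hours: "
      f"{pct_change(baseline.outsourced_hours, current.outsourced_hours):+.1f}%")
print(f"Feedback time: "
      f"{pct_change(baseline.feedback_minutes, current.feedback_minutes):+.1f}%")
```

Comparing the coverage trend against the outsourced-hours trend shows whether automation is actually displacing outsourced effort or merely adding to it.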
