See Virtuoso QA in Action - Try Interactive Demo

Risk based regression testing aligns testing effort with business impact, concentrating coverage on functionality where defects matter most.

Not all functionality carries equal risk. A defect in payment processing creates different consequences than a defect in help text formatting. Risk based regression testing aligns testing effort with business impact, concentrating coverage on functionality where defects matter most. This guide presents a comprehensive approach to assessing risk, prioritizing tests, and optimizing regression testing for maximum defect prevention where prevention matters most.

Risk based testing allocates testing effort according to risk rather than treating all functionality equally. The approach recognizes that testing resources are finite and that not all defects carry equal business consequence.

Risk combines two factors: probability of failure and impact of failure. High probability alone does not indicate high risk if impact is minimal. High impact alone does not indicate high risk if probability is negligible. Risk based testing addresses functionality where both probability and impact are significant.

Some functionality fails more often than others.

Understanding failure probability enables targeting tests where defects are likely.

Some failures matter more than others.

Understanding impact enables targeting tests where defects are consequential.

Traditional regression testing attempts exhaustive coverage. Run everything. Validate everything. Miss nothing. This approach sounds thorough but fails in practice.

Exhaustive testing is impossible. Time constraints force choices. Without explicit risk assessment, choices become arbitrary. Critical functionality receives the same attention as trivial functionality. Or worse, critical functionality receives less attention because it is complex and time consuming to test.

Risk based testing makes choices explicit. Teams consciously decide what to test thoroughly, what to test adequately, and what to test minimally. Decisions align with business priorities rather than convenience.

Effective risk-based testing requires systematic risk assessment before a single test is written or prioritised. The framework covers two dimensions: the impact of a defect reaching production, and the probability that a defect exists.

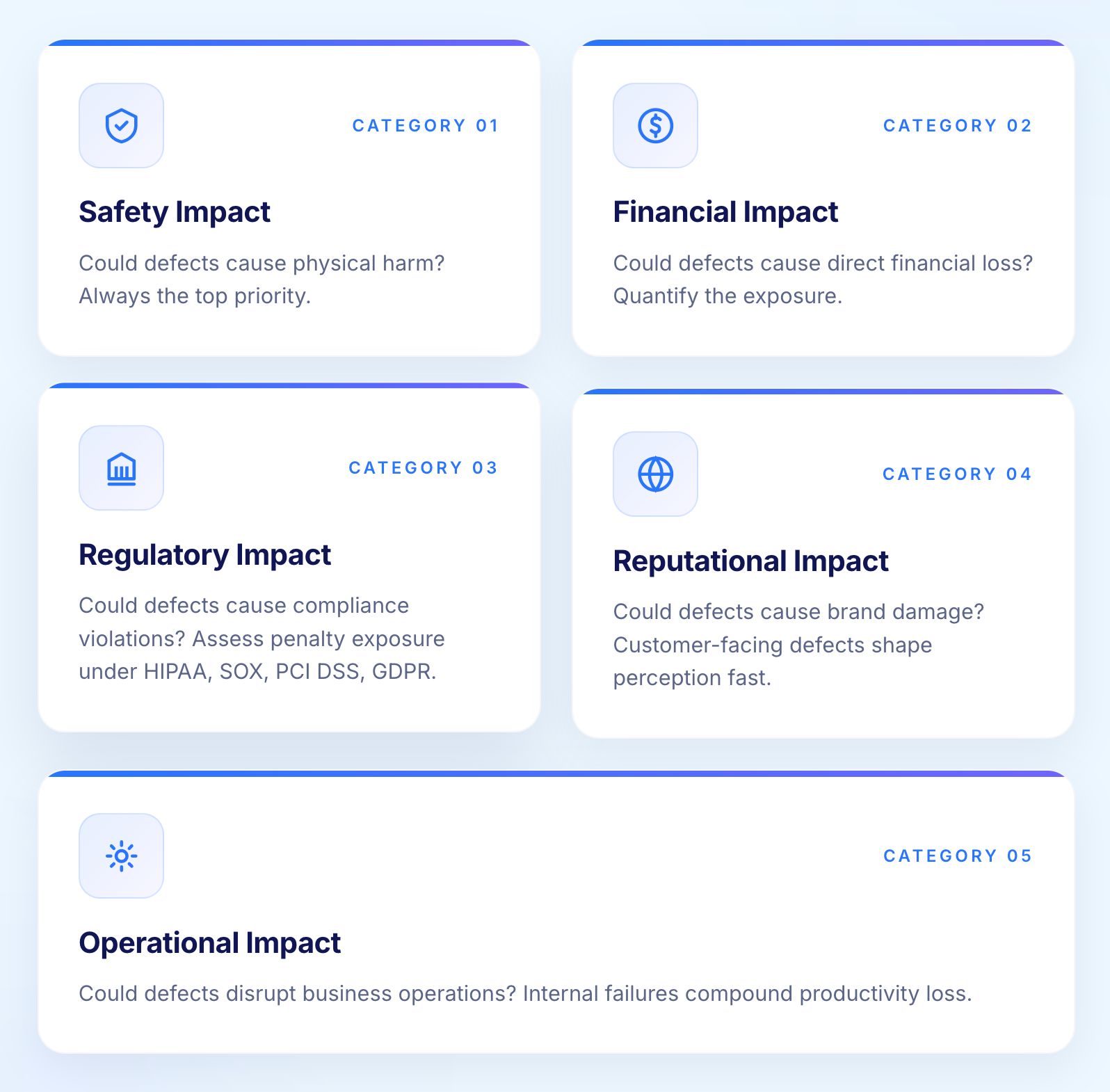

Impact assessment evaluates the consequences of defects reaching production across five categories.

Could defects cause physical harm? Medical applications, industrial controls, and transportation systems have safety implications. Safety impact takes priority over all other impact categories without exception.

Could defects cause direct financial loss? Payment processing, trading systems, and billing applications have immediate financial impact. Quantify the potential loss magnitude for each area under assessment.

Could defects cause compliance violations? Healthcare (HIPAA), finance (SOX, PCI DSS), and data protection (GDPR) regulations impose significant consequences for violations. Assess penalty exposure specifically, not just the existence of a regulation.

Could defects cause brand damage? Customer-facing defects visible to many users create perception problems even when technical impact is limited. High-traffic pages and core user journeys carry elevated reputational risk.

Could defects disrupt business operations? Internal system failures may not affect customers directly but prevent employees from working effectively, creating productivity losses that compound over time.

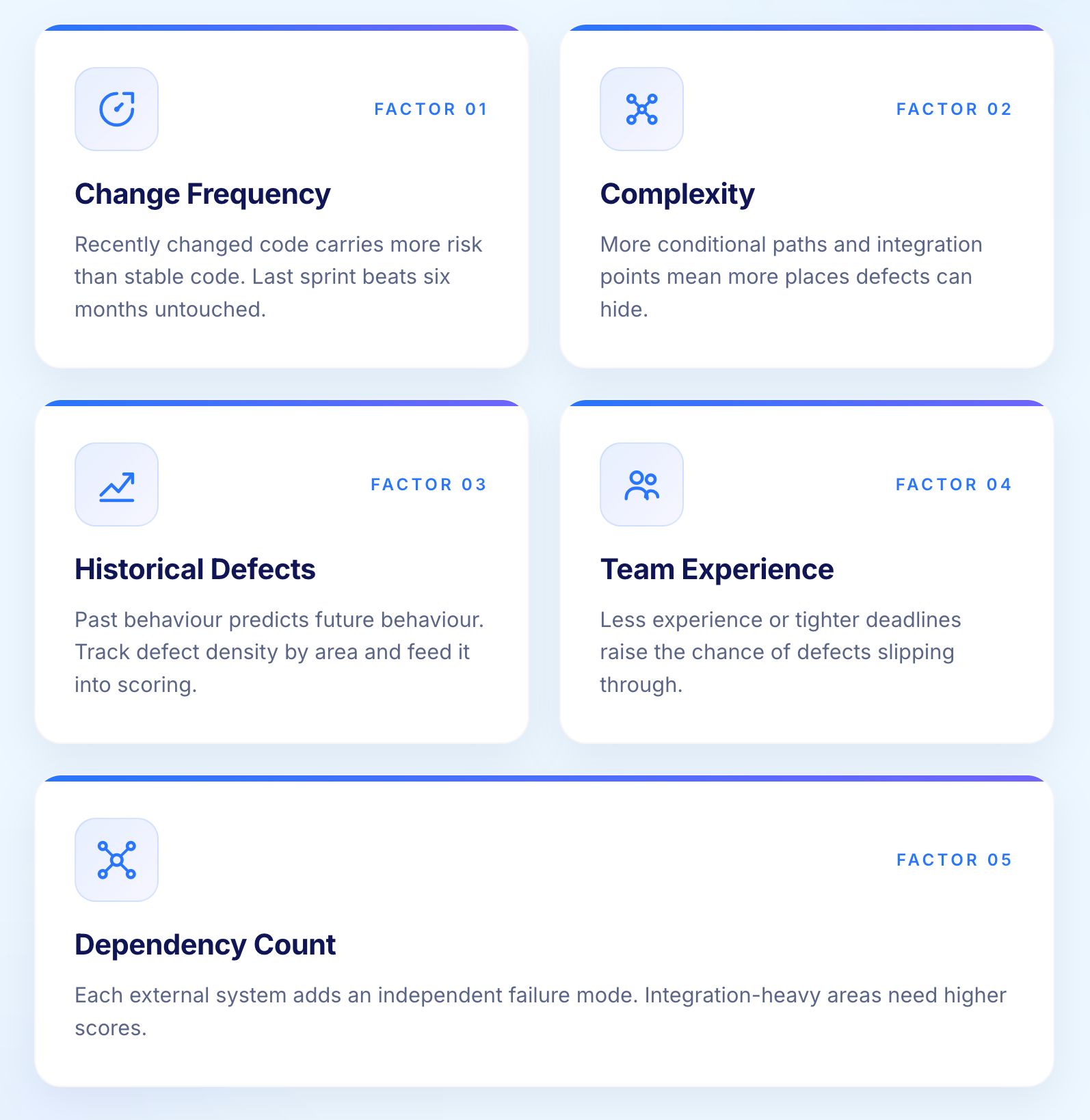

Probability assessment evaluates the likelihood that defects exist in a given area of the application.

Recently changed functionality has higher defect probability than stable functionality. Assess how recently and how extensively each area changed. Code touched in the last sprint carries higher risk than code unchanged for six months.

Complex functionality with many conditional paths, integration points, and edge cases has higher defect probability than simple functionality. Cyclomatic complexity is a useful proxy when code-level data is available.

Areas with historical defect concentrations likely continue producing defects. Past behaviour predicts future behaviour for code quality. Track defect density by functional area and use it as a direct input to probability scoring.

Functionality developed by less experienced team members or under time pressure has higher defect probability than functionality developed by experienced teams with adequate time and review.

Functionality depending on many external systems has higher defect probability because each dependency introduces an independent failure mode. Integration-heavy areas deserve elevated probability scores.

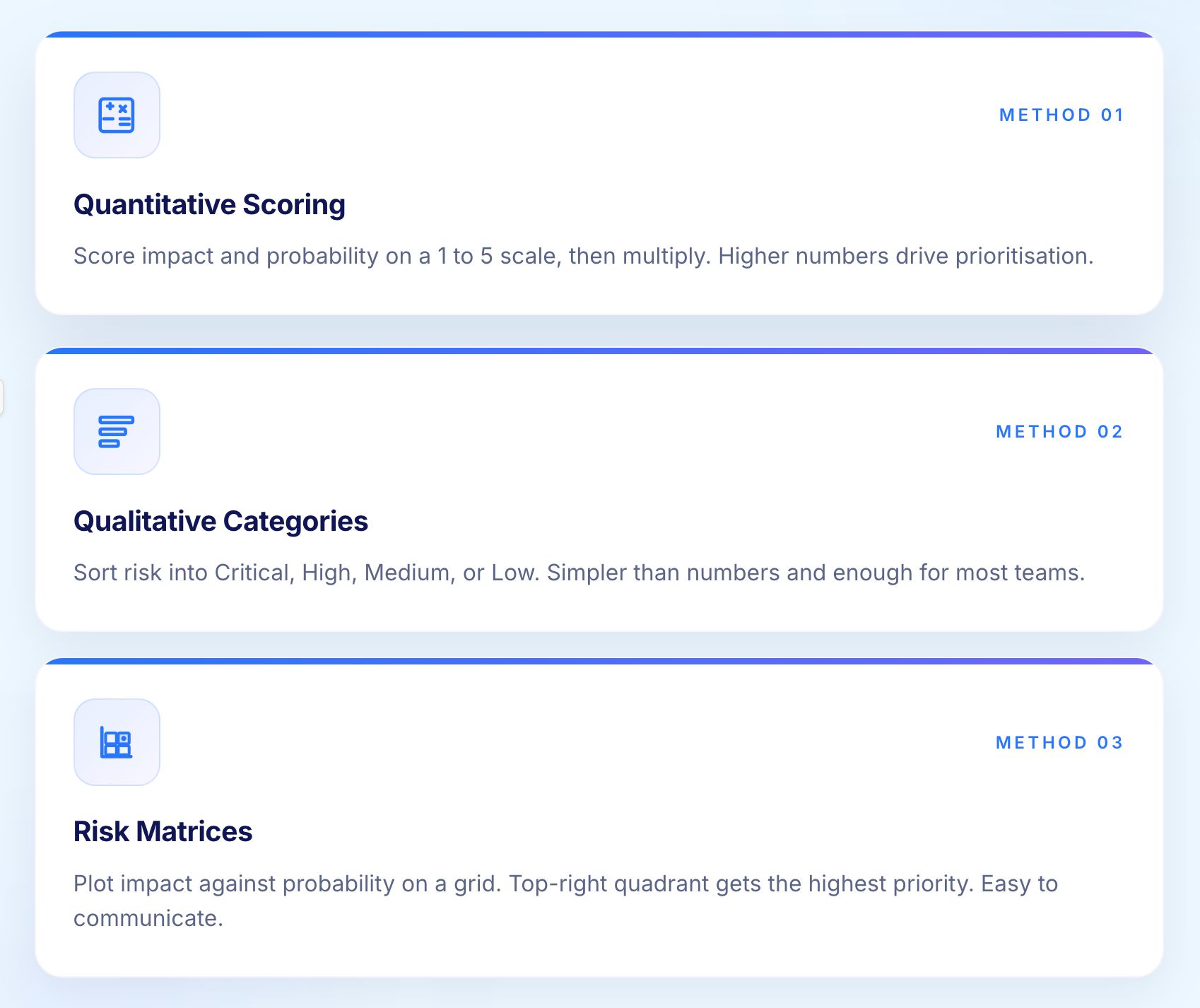

Three approaches to combining impact and probability into actionable risk scores.

Assign numeric values to impact (1 to 5 scale for each impact category) and probability (1 to 5 scale). Calculate risk as impact multiplied by probability. Higher scores indicate higher risk and drive test prioritisation decisions.

Categorise risk as Critical, High, Medium, or Low based on combined assessment. Simpler than numeric scoring and sufficient for most organisations. The important thing is that the categorisation is consistent and documented.

Plot functionality on matrices with impact on one axis and probability on the other. Quadrants indicate risk levels: high impact combined with high probability produces the highest risk. Risk matrices make the assessment visible and easy to communicate to stakeholders.

Risk assessment is only valuable if it directly shapes what gets tested, how thoroughly, and how often. Three dimensions translate risk scores into concrete testing choices that teams can implement immediately.

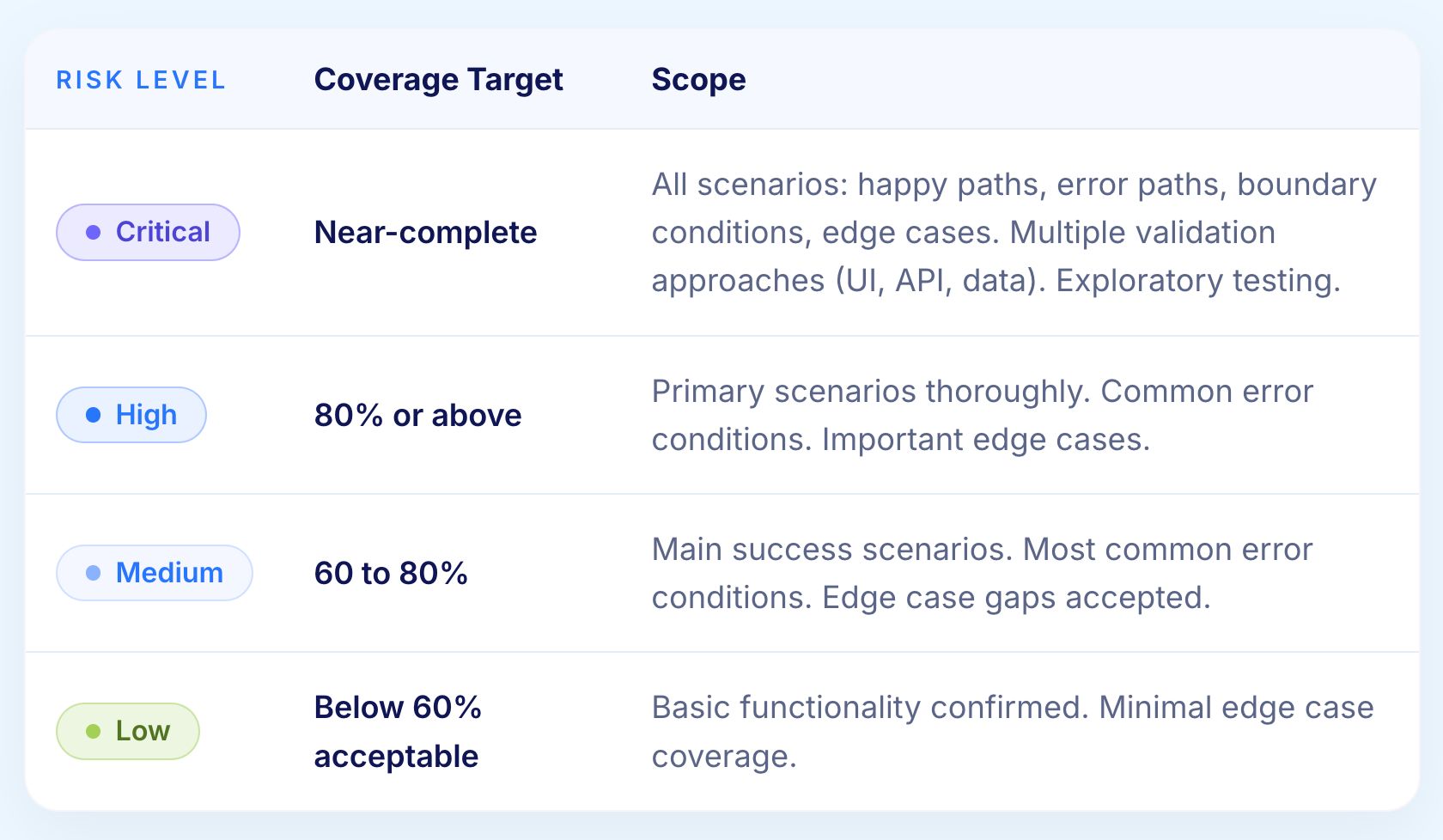

Functionality in the critical risk category receives maximum testing attention. Tests execute on every build. Multiple test scenarios cover varied conditions. Senior testers review results. Any failures block releases without exception.

In practice, this means a 2,000-test suite does not treat Priority 1, Priority 2, and Priority 3 tests as equally discretionary. Critical functionality always receives full validation regardless of time pressure.

High-risk functionality receives thorough testing. Tests execute on major releases and integration milestones. Coverage includes primary scenarios and common edge cases. Failures require investigation before release.

Medium-risk functionality receives adequate testing. Tests execute periodically, typically weekly or monthly. Coverage focuses on primary scenarios. Failures require assessment but may not block releases depending on severity.

Low-risk functionality receives minimal testing. Tests execute infrequently, quarterly or before major releases. Coverage confirms basic operation. Failures are logged but may be accepted for release when impact assessment supports that decision.

Risk level determines not just how often tests run but how comprehensively they cover each functional area.

Execute tests with frequency matching risk level.

Run on every code commit or at minimum every build. Catching critical defects early prevents expensive late discovery. Fast feedback enables immediate remediation.

Run on every release and significant integration points. Daily or nightly execution catches defects before they compound.

Run weekly or per release cycle. Less frequent execution balances coverage against execution burden.

Run monthly or quarterly. Infrequent execution confirms ongoing operation without consuming regular execution capacity.

Risk assessment informs which tests to run. Risk-based test design determines what those tests validate and how they are structured to catch the most consequential defects.

Identify the paths through applications that users traverse most frequently and that carry the highest business impact. Ensure comprehensive testing covers these paths thoroughly, not just their happy-path versions.

Design tests specifically targeting likely failure modes. If integration failures pose high risk, design tests validating integration robustness under realistic failure conditions rather than only testing the success path.

For high-risk functionality, test not just normal operation but recovery from failures. Can the system recover from database timeouts, network interruptions, and service unavailability? Recovery failures often carry higher impact than the original failure.

What tests assert matters as much as what they exercise.

Verify that calculations, transformations, and decisions follow business rules. Business rule violations often create higher impact than technical failures and are more likely to reach production undetected.

Verify that data remains accurate and consistent throughout operations. Data corruption may persist and compound, creating long-term damage that is difficult and expensive to remediate.

For functionality with security implications, verify that access controls, encryption, and audit logging function correctly. Security assertions belong in functional test suites, not only in dedicated security testing.

Risk is not static. A risk assessment conducted at the start of a project becomes progressively less accurate as the application evolves. Dynamic risk assessment keeps the model current.

Risk assessments should update in response to specific events rather than on a fixed calendar alone. Three triggers cover the situations where risk profiles change most significantly.

When code changes, reassess risk for affected functionality. A stable low-risk area becomes higher risk after significant modification. The change itself is a risk signal, not just the functional outcome of the change.

When production incidents occur, reassess risk for the involved functionality. Incidents reveal risk that the assessment underestimated. Every production defect is direct feedback on the accuracy of the risk model and should trigger a review of the relevant risk scores.

Schedule regular risk review independent of specific changes or incidents. Quarterly reviews catch risk drift from gradual changes that do not trigger change-based or incident-based reassessment individually but collectively shift the risk profile meaningfully.

Manual risk assessment cannot keep pace with continuous delivery. Automating the collection of risk indicators makes dynamic assessment practical at scale without requiring a dedicated analyst to monitor every change.

Track code change frequency automatically by functional area. Accelerating change indicates increasing risk and should trigger elevated test coverage without requiring manual assessment decisions for each commit.

Track defect discovery rates by functional area over time. Rising defect rates indicate increasing risk. Declining rates may indicate improving quality or, more worryingly, insufficient testing coverage that is failing to surface existing defects.

Track test pass rates over time by functional area. Declining pass rates may indicate increasing instability before that instability manifests as production defects that reach users.

AI identifies risk patterns that manual assessment consistently misses, particularly in large codebases where the signal-to-noise ratio makes pattern recognition difficult for humans working with limited time.

Machine learning models trained on historical defect data predict which code areas will produce defects. These predictions add a forward-looking dimension to risk assessment that historical analysis alone cannot provide.

AI analysing code changes predicts impact scope more accurately than static dependency analysis. A small change affecting critical business logic carries different risk than a large change affecting comments or variable names. Semantic analysis catches this distinction automatically without requiring manual review of every change.

Machine learning identifies subtle patterns correlating with defects across codebases, teams, and release cycles. These patterns become additional risk factors in automated assessment, surfacing risk that no individual reviewer would identify working manually.

Enterprise organisations face risk-based testing considerations that do not arise at smaller scale. Portfolio complexity, regulatory obligations, and execution infrastructure all require specific approaches that go beyond what works for a single application team.

Large organisations have many applications with interdependencies. Risk assessment cannot treat each application in isolation as though it exists independently of everything around it.

Not all applications carry equal business importance. Core revenue-generating applications warrant more testing investment than supporting utilities. Explicit criticality rankings prevent testing effort from concentrating on familiar applications while neglecting critical but complex ones that teams find harder to test.

Applications depending on other applications inherit risk. A low-risk application depending on a high-risk service may itself become high-risk during periods when the upstream service is changing rapidly. Dependency chain analysis prevents teams from treating inherited risk as someone else's problem.

Track coverage distribution across applications to identify gaps before they result in production incidents. The goal is not equal coverage across all applications but appropriate coverage proportional to each application's risk profile and business criticality.

Regulated industries have specific risk requirements that must be incorporated into the risk assessment framework rather than treated as a separate compliance activity managed by a different team.

Regulations may mandate testing for specific functionality. HIPAA requires testing of healthcare data handling. PCI DSS requires testing of payment card processing. SOX requires testing of financial controls. These mandates translate directly into minimum testing requirements regardless of probability scores. A low-probability area with a regulatory mandate still requires testing.

Risk-based testing must produce documentation supporting audit requirements. Test results, coverage reports, and risk assessments become audit artefacts. Structure documentation with audit requirements in mind from the start rather than retrofitting documentation to meet audit requests after the fact.

Regulatory violations create financial and operational penalties that must be factored explicitly into impact assessment. The financial exposure from a GDPR violation may dwarf the direct business impact of the defect itself, making regulatory impact one of the most significant factors in the risk equation.

Enterprise-scale execution requires infrastructure and coordination that goes beyond what individual teams typically manage. Two capabilities make the difference between risk-based testing that works in theory and risk-based testing that works in practice at enterprise scale.

Enterprise workflows span multiple applications. Risk-based testing must cover cross-application integration, not just individual application functionality. A workflow spanning CRM, ERP, and billing requires end-to-end validation that treats the integrated workflow as the unit of risk, not each application independently.

Enterprise test suites may contain tens of thousands of tests. Parallel execution across 100 or more concurrent test environments transforms execution economics entirely. Organisations executing 100,000 annual regression runs via CI/CD demonstrate what becomes possible when execution scales to meet enterprise needs. Risk-based prioritisation combined with parallelisation ensures critical functionality receives full validation without time constraints forcing coverage compromises.

AI improves risk-based testing at every stage: assessment, test selection, execution, and failure analysis.

AI brings capabilities to risk assessment that manual processes cannot replicate at scale. Semantic change analysis identifies that a small change affecting critical business logic carries different risk than a large change affecting comments, without manual review of every commit.

Historical pattern matching surfaces elevated risk in code structurally similar to past defect sources. Cross-reference analysis correlates code changes, test results, and production incidents simultaneously, producing risk profiles more accurate than any single data source provides alone.

Rather than running tests in a fixed order, AI selects tests that maximise risk coverage within available time. Selection updates automatically as code changes shift risk profiles. Tests that catch defects receive higher selection weight over time while tests that consistently pass without catching anything receive lower weight, improving efficiency without manual curation.

Flaky tests corrupt risk assessment data. A test failing intermittently for environmental reasons produces false risk signals that mislead prioritisation decisions. Self-healing keeps tests as reliable indicators of actual application risk.

When tests fail, the critical question is whether the failure indicates a genuine production defect or a test environment issue. AI analysing failure patterns, error codes, and environmental factors resolves this faster.

Risk-based testing depends on three things working reliably: tests that stay current as the application changes, failures that are diagnosed quickly, and execution that scales to match the risk coverage required. Virtuoso QA addresses all three.

The result is a regression programme where effort concentrates where risk is highest, maintenance does not consume the capacity needed to expand coverage, and failures get resolved while context is still fresh.

Try Virtuoso QA in Action

See how Virtuoso QA transforms plain English into fully executable tests within seconds.