
Agile test automation done right eliminates the testing bottleneck. Learn sprint-by-sprint implementation, CI/CD integration, and shift-left strategies.

Agile test automation is the practice of embedding automated testing directly into agile sprint workflows so that quality verification happens continuously, not as a phase at the end. When done right, it eliminates the testing bottleneck that forces teams to choose between shipping fast and shipping safely. When done wrong, it creates a secondary maintenance burden that slows teams down more than manual testing ever did. This guide covers the practical how: what to automate, when to automate it, how to structure automation within sprints, and how AI native platforms make the entire process faster and more sustainable.

Agile test automation is the integration of automated testing into agile development workflows, where tests are created, executed, and maintained in rhythm with iterative sprint cycles. Unlike traditional automation approaches where tests are built after development is complete, agile test automation happens concurrently with development.

The core principle is simple: every sprint that delivers working software should also deliver the automated tests that verify that software. Test automation is not a separate project. It is part of the definition of done for every user story.

This matters because agile development only works when feedback is fast. If a team delivers a feature in a two week sprint but needs another two weeks to test it, the sprint cadence becomes meaningless. Agile test automation closes that loop by providing immediate, automated feedback on whether new code works correctly and whether it has broken anything that worked before.

The shift is cultural as much as it is technical. Agile test automation requires QA to operate as part of the development team, not as a separate function that receives hand offs. Testers participate in sprint planning, contribute to user story refinement, and begin test design before a single line of code is written.

Traditional test automation was designed for waterfall development. Build the application, then build the tests, then run the tests. This sequential approach collapses when applied to agile sprints for several practical reasons.

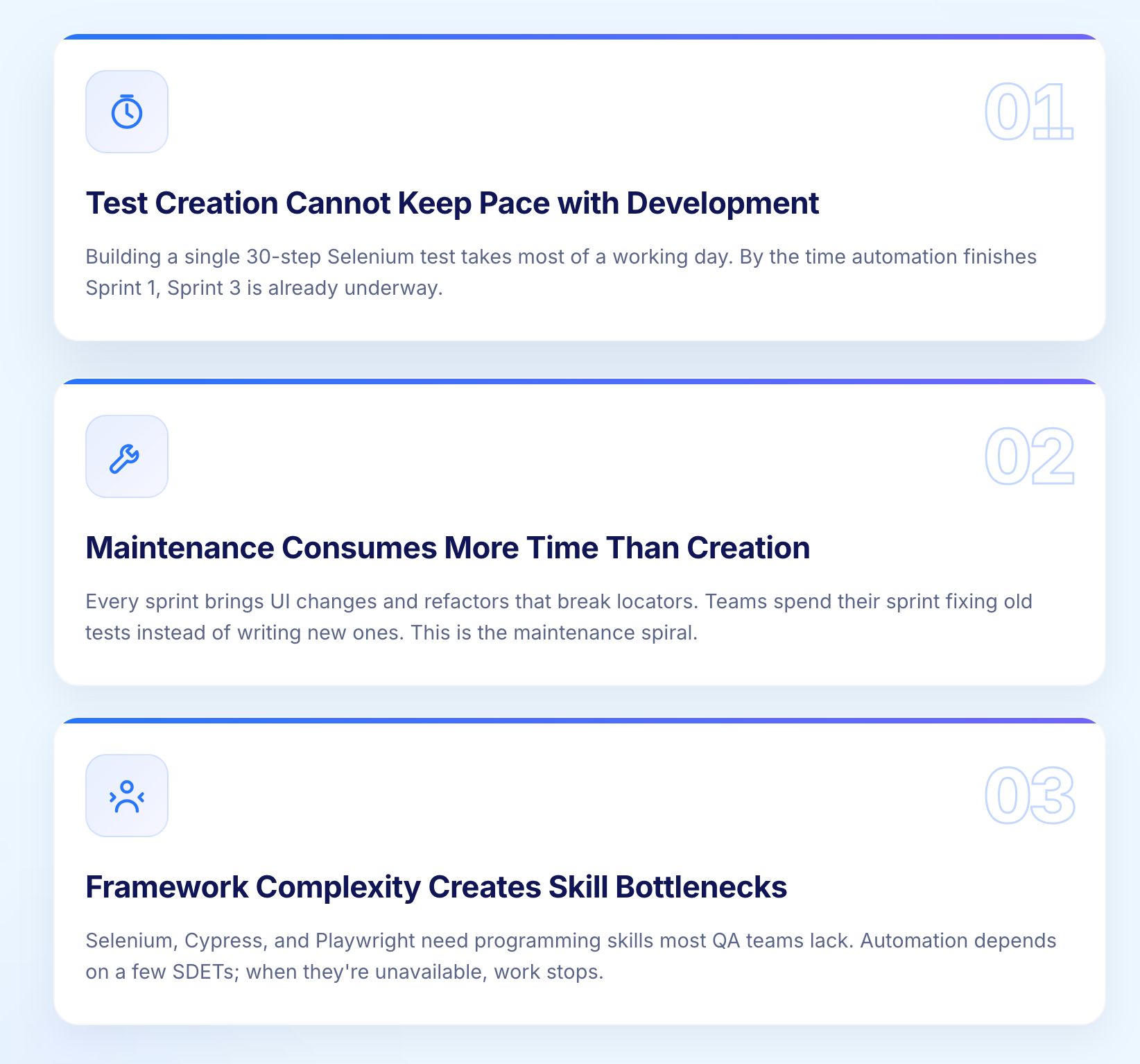

In traditional automation using frameworks like Selenium, building a single 30 step automated test takes 8 to 12 hours. A typical sprint delivers 5 to 10 user stories, each requiring multiple test scenarios. The math does not work. By the time automation catches up with Sprint 1, Sprint 3 is already underway.
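A rough sketch makes the arithmetic concrete. The hours per test and stories per sprint come from the figures above; the three scenarios per story, the two automation engineers, and the 60 focused hours each per sprint are illustrative assumptions, not measured values.

```python
def authoring_hours(stories, scenarios_per_story, hours_per_test):
    """Total hours needed to automate every scenario delivered in one sprint."""
    return stories * scenarios_per_story * hours_per_test

# Assumption: 2 automation engineers x 60 focused hours in a two week sprint.
capacity_hours = 2 * 60

# Low end: 5 stories at 8 hours per test. High end: 10 stories at 12 hours per test.
best_case = authoring_hours(stories=5, scenarios_per_story=3, hours_per_test=8)
worst_case = authoring_hours(stories=10, scenarios_per_story=3, hours_per_test=12)

print(f"authoring demand: {best_case} to {worst_case} hours")
print(f"available capacity: {capacity_hours} hours")
print(f"sprints of authoring per sprint delivered: "
      f"{best_case / capacity_hours:.1f} to {worst_case / capacity_hours:.1f}")
```

Under these assumptions, even the low end consumes the whole sprint's automation capacity before any maintenance is done, and the high end needs three sprints of effort for one sprint of features.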

This gap creates a growing backlog of unautomated functionality that either gets tested manually (slow, inconsistent) or does not get tested at all (risky). Neither outcome is acceptable in a delivery model that ships incrementally every two weeks.

Research consistently shows that teams using framework based automation spend approximately 80% of their time on test maintenance and only 10% on authoring new tests. In agile, where the application changes every sprint, this ratio is devastating.

Every sprint introduces UI changes, new features, and refactored components that break existing test locators and assertions. The automation team spends most of their sprint fixing broken tests from previous sprints instead of creating tests for current work. This is the maintenance spiral that causes 73% of test automation projects to fail to deliver ROI.

Selenium, Cypress, and Playwright require programming skills that many QA professionals do not have. This creates a dependency on a small number of SDETs (Software Development Engineers in Test) who become bottlenecks. When the SDET is sick, on vacation, or overloaded, automation stops.

Agile demands that the entire QA team contributes to automation. Any approach that limits automation to specialists is structurally incompatible with agile's collaborative, cross functional model.

The test automation pyramid, originally articulated by Mike Cohn and later expanded by practitioners like Martin Fowler, provides the architectural framework for agile test automation. It defines three layers, each with a different purpose, speed, and maintenance profile.

Unit tests form the broad base of the pyramid. They test individual functions, methods, and classes in isolation. Unit tests execute in milliseconds, provide immediate feedback to developers, and are the cheapest to create and maintain.

In agile, developers write unit tests as part of their coding workflow, often using test driven development (TDD) practices. Every user story should include unit test coverage as part of the definition of done. Unit test failures detected within seconds of a code commit enable immediate correction before defects compound.
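As a minimal sketch of what that looks like, here is a unit test written with Python's standard `unittest` module. The `apply_discount` function is a hypothetical piece of story code invented for illustration.

```python
import unittest

def apply_discount(price, percent):
    """Return price reduced by percent; reject out-of-range percentages."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)

class ApplyDiscountTest(unittest.TestCase):
    def test_reduces_price(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 120)

# Build and run the suite explicitly so the example also works when imported.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Both tests touch no network, database, or browser, which is why tests at this layer finish in milliseconds.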

Integration tests verify that components work correctly together. API tests validate that backend services return correct data, handle errors appropriately, and maintain contracts with consuming applications.

This middle layer is critical for enterprise applications where business logic spans multiple services and modules. An order processing system might involve inventory APIs, pricing engines, tax calculation services, and payment gateways. Integration tests confirm that these components collaborate correctly before any UI testing begins.
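The order processing example can be sketched as follows. Every class and function name here is hypothetical, and the in-process fakes stand in for what would be real or stubbed services in an actual suite. The point is only to show a test that exercises the collaboration between components rather than any one of them.

```python
from dataclasses import dataclass

@dataclass
class Order:
    sku: str
    quantity: int

class FakeInventory:
    def __init__(self, stock):
        self.stock = dict(stock)

    def reserve(self, sku, qty):
        # Refuse the reservation when there is not enough stock.
        if self.stock.get(sku, 0) < qty:
            return False
        self.stock[sku] -= qty
        return True

class FakePricing:
    def unit_price(self, sku):
        return {"SKU-1": 20.0}[sku]

class FakeTax:
    def tax_for(self, amount):
        return round(amount * 0.10, 2)  # assumed flat 10% rate

class FakePayments:
    def __init__(self):
        self.charges = []

    def charge(self, amount):
        self.charges.append(amount)
        return "approved"

def place_order(order, inventory, pricing, tax, payments):
    """Orchestrates the four services; this is the logic under test."""
    if not inventory.reserve(order.sku, order.quantity):
        return {"status": "out_of_stock"}
    subtotal = pricing.unit_price(order.sku) * order.quantity
    total = round(subtotal + tax.tax_for(subtotal), 2)
    return {"status": payments.charge(total), "total": total}

inventory, payments = FakeInventory({"SKU-1": 3}), FakePayments()
accepted = place_order(Order("SKU-1", 2), inventory, FakePricing(), FakeTax(), payments)
rejected = place_order(Order("SKU-1", 5), inventory, FakePricing(), FakeTax(), payments)
```

The second order checks the contract that matters most across services: when inventory refuses the reservation, payment is never charged.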

API testing within agile sprints should be automated and integrated into CI/CD pipelines. Unified functional testing platforms like Virtuoso QA, which combine API calls with UI validation in a single test journey, provide comprehensive coverage without the need to maintain separate API and UI test suites.

End to end tests validate complete user workflows through the application's interface. They confirm that the entire system, from UI through business logic to database, works correctly from the user's perspective.

This layer sits at the top of the pyramid because end to end tests are the slowest to execute, the most expensive to maintain, and the most prone to flakiness. They are also the most valuable for catching integration defects and user experience issues that lower level tests cannot detect.

In agile, end to end tests should focus on critical business paths rather than attempting exhaustive coverage. A retail application needs automated end to end tests for the purchase flow, account creation, and order tracking. It does not need end to end tests for every possible product filter combination.

Include test automation effort in user story estimation. If a story requires three days of development, it also requires test design and automation time. Stories that are marked "done" without corresponding automated tests create technical debt that accumulates exponentially.

Identify which user stories require new automated tests, which require updates to existing tests, and which are covered by existing automation. This assessment prevents duplicate effort and focuses automation resources on genuine coverage gaps.

Prioritize automation based on business risk and regression value. A payment processing flow that executes thousands of times daily deserves comprehensive automation. An admin settings page accessed weekly by two people does not.
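One way to make that prioritization explicit is a simple score. The formula and the 1 to 5 scales below are assumptions chosen for illustration; teams should substitute whatever risk model they already use.

```python
def automation_priority(daily_executions, failure_impact, change_frequency):
    """Score = usage x impact x churn; a higher score means automate sooner.

    failure_impact and change_frequency use an assumed 1 (low) to 5 (high) scale.
    """
    return daily_executions * failure_impact * change_frequency

candidates = {
    # Executed thousands of times a day, and costly when it fails.
    "payment processing": automation_priority(5000, failure_impact=5, change_frequency=3),
    # Two people, once a week: roughly 0.3 executions per day.
    "admin settings": automation_priority(0.3, failure_impact=2, change_frequency=1),
}

ranked = sorted(candidates, key=candidates.get, reverse=True)
print(ranked)
```

The absolute numbers matter less than the ordering, which gives sprint planning a shared, reviewable basis for deciding what gets automated first.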

Begin test design as soon as user story acceptance criteria are finalized. Testers do not need to wait for a working build to design test scenarios. In many cases, tests can be written against acceptance criteria and stubbed APIs before the UI is available.

Virtuoso QA with StepIQ can generate test steps autonomously by analyzing the application under test. This means test creation begins the moment a build is available, without the manual step by step authoring that traditional frameworks require. StepIQ accelerates test authoring by 90%, cutting the time to build a 30 step test from between 8 and 12 hours to approximately 45 minutes.

Execute tests continuously as builds become available. Automated tests running in CI/CD pipelines provide immediate feedback on every commit. Developers learn within minutes whether their changes introduced regressions, enabling same day resolution rather than end of sprint firefighting.

Use Live Authoring to create and debug tests in real time. Instead of the traditional write, run, debug, repeat cycle, Live Authoring executes each test step as you write it. Immediate visual feedback eliminates guesswork and accelerates the path from test design to working automation.

Report automation coverage alongside functional delivery. If the team delivered 8 user stories, how many have corresponding automated tests? What is the overall regression test pass rate? What is the automation coverage percentage?
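Those review questions reduce to two ratios. A minimal sketch follows; the 8 delivered stories come from the example above, while the other input numbers are made up for illustration.

```python
def coverage_report(stories_delivered, stories_automated, tests_passed, tests_run):
    """Summarize sprint automation coverage and regression health as percentages."""
    if stories_delivered == 0 or tests_run == 0:
        raise ValueError("need at least one delivered story and one executed test")
    return {
        "automation_coverage_pct": round(100 * stories_automated / stories_delivered, 1),
        "regression_pass_rate_pct": round(100 * tests_passed / tests_run, 1),
    }

report = coverage_report(stories_delivered=8, stories_automated=6,
                         tests_passed=412, tests_run=420)
print(report)
```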

Demonstrate automated tests as part of the sprint demo. Stakeholders should see that quality verification is built into the delivery process, not appended after it. This builds confidence in the team's ability to ship safely at sprint velocity.

Review automation metrics: new tests created, tests broken and repaired, flake rate, execution time trends, and maintenance hours consumed. If maintenance hours are growing faster than new test creation, the automation approach needs re-evaluation.
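The warning sign described above, maintenance growing faster than creation, can be checked mechanically. This sketch assumes the team logs maintenance and authoring hours per sprint; the field names and sample figures are hypothetical.

```python
def maintenance_trend(sprints):
    """Return each sprint's maintenance share of automation time,
    plus a flag that is True when that share rose every sprint."""
    shares = [
        s["maintenance_hours"] / (s["maintenance_hours"] + s["authoring_hours"])
        for s in sprints
    ]
    rising = all(later > earlier for earlier, later in zip(shares, shares[1:]))
    return shares, rising

history = [
    {"maintenance_hours": 10, "authoring_hours": 40},
    {"maintenance_hours": 20, "authoring_hours": 35},
    {"maintenance_hours": 35, "authoring_hours": 25},
]
shares, rising = maintenance_trend(history)
print([round(s, 2) for s in shares], "re-evaluate approach:", rising)
```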

Discuss automation blockers. Are there application areas that are particularly difficult to automate? Are there skill gaps on the team? Are environment instabilities causing false failures? Address these systematically to prevent automation from becoming a drag on sprint velocity.

Agile test automation without CI/CD integration is manual testing with extra steps. The real power of automation emerges when tests execute automatically with every code change, providing continuous quality feedback without human triggering.

Structure your pipeline to match the test pyramid. Unit tests run first because they execute fastest and catch the most common defects. If unit tests pass, integration and API tests execute next. If those pass, end to end UI tests run last.

This sequential approach means failures are caught at the cheapest level first. A unit test failure stops the pipeline in seconds. An end to end failure takes minutes to detect. Running all tests in parallel without sequencing wastes pipeline resources on end to end tests when a unit test would have caught the defect immediately.
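The fail-fast ordering can be sketched in a few lines. Real pipelines express this in the CI tool's own configuration; the Python below is only a model of the control flow, with each stage simulated by a function that returns pass or fail.

```python
def run_pipeline(stages):
    """Run stages in order and stop at the first failure."""
    executed = []
    for name, run in stages:
        executed.append(name)
        if not run():
            return {"passed": False, "failed_stage": name, "executed": executed}
    return {"passed": True, "failed_stage": None, "executed": executed}

stages = [
    ("unit", lambda: True),
    ("integration", lambda: False),  # simulated failure
    ("end_to_end", lambda: True),    # never reached in this run
]

outcome = run_pipeline(stages)
print(outcome)
```

Because the integration stage fails, the slow end to end stage never starts, which is exactly the resource saving the sequencing is meant to deliver.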

AI native test automation platforms integrate directly with enterprise CI/CD tools including Jenkins, Azure DevOps, GitHub Actions, GitLab, CircleCI, and Bamboo. Tests can be triggered by code commits, pull requests, scheduled runs, or manual invocation through the pipeline.

Enterprise customers executing 100,000+ tests annually via CI/CD integration report over 90% reduction in effort compared to manual testing approaches. The combination of automated triggering, parallel execution, and AI powered analysis transforms testing from a sprint bottleneck into a sprint accelerator.

Agile sprints demand fast feedback. A regression suite that takes 8 hours to run is not providing agile feedback. It is providing overnight feedback, which is fundamentally different.

Cloud based parallel execution runs 100+ tests concurrently across 2000+ browser and device combinations. Regression suites that previously took hours can complete in minutes, enabling multiple test cycles per day instead of one cycle per night.
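A back-of-the-envelope estimate shows why parallelism changes the feedback loop. The sketch assumes 960 independent tests of 30 seconds each, which adds up to the 8 hour suite mentioned above, and ignores environment setup and queueing overhead.

```python
import math

def wall_clock_seconds(test_count, seconds_per_test, workers):
    """Idealized run time: tests are independent and equally long."""
    return math.ceil(test_count / workers) * seconds_per_test

serial = wall_clock_seconds(960, 30, workers=1)
parallel = wall_clock_seconds(960, 30, workers=100)

print(f"serial: {serial / 3600:.0f} hours, parallel: {parallel / 60:.0f} minutes")
```

Real suites fall short of this ideal because test durations vary and environments take time to provision, but the order of magnitude holds.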

Defects found in production cost up to 30 times more to fix than those caught during development. Every stage a defect travels through the delivery cycle multiplies its cost. Shift-left is not a philosophy. It is financial logic.

The reason most teams have not achieved genuine shift-left is not intent. It is tooling. Traditional frameworks require a working build before a single test can be created. That dependency pushes testing right by default.

Modern AI-native platforms decouple test authoring from application availability. Tests can be written against acceptance criteria, wireframes, and stubbed APIs before development begins. When the build is ready, those tests execute immediately with no rework required.

This removes the most common cause of testing falling behind: waiting. Teams that start test design at story definition rather than story completion consistently enter UAT with full automated coverage already in place.

In-sprint automation means every feature delivered in a sprint has corresponding automated tests created within that same sprint. It prevents the accumulating backlog of unautomated functionality that compounds into permanent test debt.

Platforms like Virtuoso QA make this achievable at real sprint velocity. Features like autonomous test step generation and live authoring cut the cycle from acceptance criteria to working automated test from days to hours.

With traditional frameworks, the math does not support shift-left. Two engineers who each take a day to build one test cannot cover a 10-story sprint and maintain an existing suite simultaneously.

The 10x authoring speed that AI-native platforms deliver changes that equation. The same team can cover the full sprint backlog and retain capacity for exploratory and risk-based testing. Shift-left becomes standard practice rather than a quarterly goal.

The biggest threat to agile test automation is maintenance consuming all available time. Every sprint changes the application, and every change risks breaking existing tests. Traditional frameworks compound this problem because they identify elements using brittle locators that break whenever the UI evolves.

AI native self healing addresses this architecturally. Instead of relying on a single CSS selector or XPath that breaks with every UI change, AI augmented object identification builds comprehensive models of each element using all available DOM attributes, visible text, position, and structural context. When individual attributes change, the platform adapts automatically.
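The idea of identifying an element from many signals rather than one can be illustrated with a toy scorer. This is not Virtuoso QA's algorithm or any vendor's; the attribute set, the equal weighting, and the 0.6 threshold are all assumptions made for the sketch.

```python
def match_score(fingerprint, candidate):
    """Fraction of recorded attributes that the candidate still matches."""
    matches = sum(1 for key, value in fingerprint.items() if candidate.get(key) == value)
    return matches / len(fingerprint)

def find_element(fingerprint, candidates, threshold=0.6):
    """Return the best-matching candidate, or None if nothing is close enough."""
    if not candidates:
        return None
    best = max(candidates, key=lambda c: match_score(fingerprint, c))
    return best if match_score(fingerprint, best) >= threshold else None

# Attributes recorded when the test was first created.
fingerprint = {"id": "submit-btn", "text": "Sign In", "tag": "button",
               "role": "button", "form": "login"}

# The page after a refactor: the button's id was renamed.
page = [
    {"id": "btn-primary", "text": "Sign In", "tag": "button", "role": "button", "form": "login"},
    {"id": "help", "text": "Help", "tag": "a", "role": "link", "form": None},
]

found = find_element(fingerprint, page)
print(found["id"] if found else "no match")
```

A locator keyed only on `id="submit-btn"` would fail here, while the multi-attribute match still finds the button because four of its five recorded attributes are unchanged.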

Not every QA professional can write Selenium code. Agile requires the entire team to contribute to automation, not just the two SDETs.

Natural Language Programming eliminates the coding barrier entirely. Testers write tests in plain English: "Navigate to the login page, type 'user@example.com' into the 'Email' field, click 'Sign In', verify 'Dashboard' appears on page." The platform translates these natural language instructions into executable automation.

This is not a compromise on test quality. Natural Language Programming handles dynamic data, API calls, iframes, Shadow DOM, and complex enterprise UI patterns. It simply makes these capabilities accessible to the entire QA team rather than restricting them to developers.

Organizations that democratize automation across the QA team report 4X increases in tester capacity. When every tester can automate, coverage expands proportionally without hiring additional specialists.

Flaky tests that pass sometimes and fail sometimes are the fastest way to destroy team confidence in automation. When developers learn to ignore test failures because "it's probably just a flaky test," the automation suite stops providing value entirely.

Flakiness in traditional frameworks primarily comes from timing issues (race conditions with asynchronous UI elements) and brittle locators (elements that match intermittently depending on render order). Both causes are architectural limitations of the locator based testing model.
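The timing cause is easy to reproduce. A fixed sleep guesses how long the UI needs; polling for a condition, the usual framework-level mitigation, checks actual state instead. The `wait_until` helper below is a generic sketch, not any framework's API, and the "element" is simulated with a timestamp.

```python
import time

def wait_until(condition, timeout=5.0, interval=0.05):
    """Poll condition until it returns True or the timeout expires."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if condition():
            return True
        time.sleep(interval)
    return condition()  # one final check at the deadline

# Simulated asynchronous element that becomes available after about 200 ms.
ready_at = time.monotonic() + 0.2
appeared = wait_until(lambda: time.monotonic() >= ready_at)

# A condition that never becomes true fails cleanly after the timeout.
never = wait_until(lambda: False, timeout=0.2)
print(appeared, never)
```

A hard-coded `time.sleep(0.1)` before the check would fail this scenario intermittently as load varies, which is the pass-sometimes, fail-sometimes behavior described above.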

AI native platforms address flakiness through intelligent synchronization (understanding page state rather than guessing with explicit waits) and multi signal element identification (matching elements based on comprehensive models rather than single locators). AI Root Cause Analysis further helps by automatically identifying whether a test failure represents a genuine application defect or a test infrastructure issue, enabling the team to focus on real problems.

In agile, requirements evolve every sprint. User stories are refined, acceptance criteria change, and features are reprioritized. Test suites must evolve in parallel or they become irrelevant.

Composable testing architectures address this by building tests from reusable, modular components. When a shared checkout flow changes, you update the checkout component once, and every test that uses it inherits the change automatically. This is fundamentally more maintainable than updating individual test scripts across hundreds of tests.
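The checkout example can be sketched with plain functions standing in for reusable test components. The step strings and journey names are hypothetical. What matters is that the checkout flow is defined exactly once.

```python
def login(steps, user):
    steps.append(f"log in as {user}")

def checkout(steps):
    # Shared component: change the flow here once and every journey inherits it.
    steps.append("open cart")
    steps.append("enter shipping address")
    steps.append("pay with saved card")

def guest_purchase_journey():
    steps = ["add item to cart"]
    checkout(steps)
    return steps

def returning_customer_journey():
    steps = []
    login(steps, "user@example.com")
    steps.append("reorder last purchase")
    checkout(steps)
    return steps

for journey in (guest_purchase_journey, returning_customer_journey):
    print(journey.__name__, "->", journey())
```

If the checkout flow gains a step, only `checkout` changes, and both journeys pick it up on their next run.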

GENerator, Virtuoso QA's agentic test generation capability, can analyze existing test assets, requirements documents, and application structure to generate comprehensive test suites. When requirements change, GENerator can rapidly produce updated test coverage without starting from scratch.

AI in software testing is not new. What has changed is how deeply it is embedded. There is a meaningful difference between a platform built on AI from the ground up and a legacy tool with an AI feature added to the surface. One changes the economics of testing. The other changes the colour of the button.

Large language models now contribute at every stage of the testing lifecycle, not just test creation.

At the authoring stage, they interpret plain English instructions and convert them into structured, executable test logic. At the generation stage, they analyse existing assets (legacy scripts, manual test cases, requirements documents, and UI screens) and convert them into modern automated tests. At the analysis stage, they categorise failures automatically, separating real application defects from environment noise. At the maintenance stage, they detect UI changes and update tests without human input.

An AI add-on sits on top of a scripted foundation. Locators still exist. Manual element identification still exists. When the application changes significantly, broken tests still need manual repair. The AI layer reduces some friction but does not remove the structural problem.

An AI-native platform removes the scripted foundation entirely. There are no locators to maintain because the identification model was never built on them. The entire system operates through AI, which means it adapts rather than breaks when the application evolves.

Agentic AI takes automation a step further. Rather than assisting a human through each phase, it operates across the full test lifecycle with minimal intervention, analysing applications, generating coverage, mapping assets into reusable components, and executing tests autonomously.

Virtuoso QA's GENerator is a practical example. It converts legacy Selenium scripts, requirements documents, and UI screens into fully executable modern tests, turning months of migration work into days. Teams spend less time building and more time on the testing that requires human judgement.

Enterprise teams in regulated industries such as financial services, healthcare, insurance, and the public sector need to know exactly what was tested and why. AI-generated tests must be auditable, not opaque.

The best AI-native platforms address this by keeping all tests human-readable. Every test step exists in plain language that any team member can review. Failure reports include structured evidence: screenshots, logs, and step-by-step detail. Full traceability from requirements to test results is maintained throughout.

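Track metrics such as automation coverage, regression pass rate, flake rate, execution time trends, and maintenance hours to verify that automation is accelerating your agile process rather than hindering it.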
